Release Notes

- IBM© StreamSets as a Service

- IBM© StreamSets for Apache Spark

- IBM© StreamSets for Snowflake

March 2025

March 21

This release includes enhancements, a behavior change, and fixed issues.

Enhancements

- Kubernetes deployments

- You can configure a Kubernetes deployment to use full autoscaling. With full autoscaling, the Kubernetes agent automatically scales the number of pods that host engine instances based on selected metrics, which can include CPU usage, memory usage, and the running pipeline count.

- Documentation updates

- With this release, Control Hub points to the IBM StreamSets Control Hub documentation on the IBM documentation website.

Behavior Change

- Update existing Kubernetes deployments to use full autoscaling

- Starting with the March 21, 2025 release, you can configure full autoscaling for Kubernetes deployments. In previous releases, you could configure legacy autoscaling only.

Fixed Issues

- When you navigate to the Sequences page and do not have the Job Operator role, you are incorrectly redirected to the Welcome page.

- You cannot copy and paste a stage in the pipeline canvas when you use Google Chrome, Firefox, or Microsoft Edge.

- The StreamSets API, including SDK usage, incorrectly allows you to create a job for a pipeline when you do not have read permission on that pipeline. With this fix, the API now requires that you have read permission on the pipeline, just as the user interface requires.

February 2025

February 19

This release includes several fixed issues.

Fixed Issues

- Editing deployments incorrectly requires the Environment Manager role.

- When you use the new pipeline canvas UI and you use a parameter for a connection property, Control Hub incorrectly displays the remaining connection properties rather than hiding the properties.

- When you use the new pipeline canvas UI, you cannot use a parameter in stage properties on the Test Origin, Start Event, or End Event tab.

- When you use the new pipeline canvas UI, properties that display as a field selector and accept multiple values incorrectly display the Used Parameter icon. You cannot use parameters in field selector properties that accept multiple values.

- You cannot edit a connection that is associated with Data Collector version 5.4.0 or earlier.

- When you edit a job that uses runtime parameters, the default parameter values are saved with the job as if you overrode the defaults. As a result, when the job is upgraded to a later pipeline version that uses new default parameter values, the job does not use the updated default values.
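
The runtime parameter issue above comes down to how defaults and overrides combine. The following is a minimal sketch, assuming that a job's effective parameters are the pipeline defaults overlaid with the job-level overrides. The function name and the `BATCH_SIZE` parameter are hypothetical and do not represent the Control Hub implementation.

```python
def effective_parameters(pipeline_defaults, job_overrides):
    """Overlay job-level overrides on the defaults of the pipeline version in use."""
    return {**pipeline_defaults, **job_overrides}


# Hypothetical defaults for two pipeline versions.
v1_defaults = {"BATCH_SIZE": 1000}
v2_defaults = {"BATCH_SIZE": 5000}

# Before the fix: editing the job stored the v1 default as if it were an override,
# so the value stays pinned after the job is upgraded to v2.
pinned = effective_parameters(v2_defaults, {"BATCH_SIZE": 1000})

# After the fix: nothing was overridden, so the job picks up the v2 default.
inherited = effective_parameters(v2_defaults, {})

print(pinned["BATCH_SIZE"], inherited["BATCH_SIZE"])
```

Storing only the values that a user explicitly changed keeps upgraded jobs aligned with the defaults of the new pipeline version.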

January 2025

January 22

This release includes an enhancement and several fixed issues.

Enhancement

- Kubernetes deployments

- In rare situations, a Kubernetes deployment can remain in an Activating or Deactivating state indefinitely. For troubleshooting purposes, you can force the deployment to stop and then manually delete any abandoned resources in the Kubernetes cluster.

Fixed Issues

- You cannot delete the current version of a pipeline if a draft run exists for that version.

- You cannot edit a connection that is associated with a custom engine build.

- When you preview a pipeline that includes a field with a time value of 0, the preview output displays no value for the field.

- You cannot create or edit connections unless you have the Deployment Manager role.

- When you select multiple jobs in the Job Instances view, and then click , the Upgrade Jobs dialog box lists only one job.

2024

December 2024

The following Control Hub releases occurred in December 2024.

December 13

This release includes several enhancements and fixed issues.

Enhancements

- Engineless connections

- In addition to standard connections, which are based on a selected authoring Data Collector in your organization, you can now create engineless connections. When you create an engineless connection, the connection can use any stage library associated with the latest Data Collector version.

- Sequences

- You now configure the step start condition for the step that you want to start or stop when the previous step is in error.

Fixed Issues

- When you switch between jobs using the global search in the toolbar, the job configuration continues to display property values for the previously viewed job.

- Offsets are not maintained when the previous job execution had no available engine.

- When you have more than 50 published fragments and you insert a fragment using the Add Stage icon from an open lane, Control Hub fails to insert the selected fragment if it is one of the most recently published fragments.

- When you import a pipeline and then change the authoring engine to Data Collector version 5.11 or later, Control Hub fails to assign the pipeline to the selected engine and displays a Failed to load resource error message.

- When you edit an active Kubernetes deployment and then immediately click Restart Engines, the engines fail to restart because the deployment is in the Activation state.

November 2024

The following Control Hub releases occurred in November 2024.

November 20

This release includes a fixed issue.

Fixed Issue

- When you monitor an active Data Collector job and view stage-related errors, the Errors tab displays no information and the page becomes unresponsive.

November 15

This release includes several enhancements and fixed issues.

Enhancements

- Pipeline configuration

- When you use the Insert Stage icon to add a stage between two connected stages, you can now add destinations and executors, in addition to processors and fragments.

- Sequences

- Sequences include the following enhancements:

- Run a sequence step - For troubleshooting purposes, you can manually run a step in a sequence. For example, if the first step in a sequence encounters an error that stops the sequence, you can correct the errors in the first step and then manually run the remaining sequence steps.

- Name a sequence step - You can add a name to a sequence step that informs your team of the step use case. You can also search for sequences by the step name.

- Kubernetes environments

- If you deactivate a Kubernetes environment but the Kubernetes agent is not gracefully shut down and deleted, you can now retrieve and run an uninstallation script to shut down and delete the unresponsive agent from the Kubernetes namespace.

Fixed Issues

- When you import a pipeline that uses a fragment that was renamed in a later version, the fragment incorrectly uses the original name from version 1 of the fragment.

- The Connections view continues to display the previous column name of Owner instead of Creator unless you clear the browser cache, reset the columns, or use a different browser.

- When you configure an Amazon EC2, Azure VM, or GCE deployment to provision EC2 or VM instances with the minimum 1GB of RAM required by an engine, the provisioned instances might unexpectedly crash when you run a job on the engine.

October 2024

The following Control Hub releases occurred in October 2024.

October 16

This release includes an enhancement and several fixed issues.

Enhancement

- Sequences

- You can add a finish condition to a sequence step to define when the job stops running.

Fixed Issues

- Some Control Hub REST APIs can cause unexpected behavior because the APIs no longer return the unused parentJobId and systemJobId fields. For backwards compatibility, the APIs now continue to return these fields.

- When network connection issues occur while a deployment is installing stage libraries on associated engines, the engines can fail to restart.

- If you select a later authoring engine version for a draft pipeline that includes properties with empty values, Control Hub might generate the following error during pipeline preview:

Cannot read properties of null

- When you select a later authoring engine version for a draft pipeline and you choose to publish the draft pipeline when the engine version used to edit the pipeline is no longer available, Control Hub generates the following error:

HTTP Status: 404 (Not Found)

September 2024

The following Control Hub releases occurred in September 2024.

September 25

This release includes an enhancement.

Enhancement

- IBM ID

- Users can log in with an IBM ID, in addition to the existing options.

September 18 and 20

This release occurred on September 18, 2024 for the eu01.hub.streamsets.com instance. The release occurred on September 20, 2024 for all other instances.

Enhancements

- Sequences

- You can use the following new properties to search for sequences:

- Job Name - Search for sequences that include the specified job name.

- Created By - Search for sequences created by the specified user email address.

- Last Modified By - Search for sequences last modified by the specified user email address.

- Data Collector pipeline failover

- When a Data Collector engine gracefully shuts down, Control Hub typically considers the engine unresponsive within one minute. As a result, if a currently active job is enabled for pipeline failover, Control Hub usually restarts the pipeline on another available engine within one minute.

Behavior Change

- Deprecated parameters in Control Hub REST API

- The withWrapper parameter used in the /jobrunner/rest/v1/saql/jobs/search/{jobId}/runs REST API has been deprecated and will be removed in a future release.

Fixed Issues

- When you delete a pipeline, Control Hub does not automatically delete the draft run created for that pipeline.

To manually delete a draft run for a previously deleted pipeline, click in the Navigation panel, select the draft run and then click the Delete icon.

- When you import a pipeline with a commit message longer than 255 characters, Control Hub displays an incorrect error message.

- The generated installation script for a Transformer Docker image for a self-managed deployment always uses the default port 19630, even when you configure a different port number in the Transformer configuration properties.

August 2024

The following Control Hub releases occurred in August 2024.

August 16, 2024

This release includes several enhancements, behavior changes, and fixed issues.

Enhancements

- Subscription parameters

- You can use the JOB_TAG parameter for a subscription triggered by a job status change.

- Connections

- In the table of connections in the Connections view, the Owner column has been renamed to Creator to clarify that the column displays the user that created the connection.

Behavior Changes

- Engine credential store properties for existing deployments

- Existing deployments that define sensitive data such as a password for credential store properties in the engine advanced configuration now display the sensitive values as REDACTED.

- Deprecated parameters in Control Hub REST API

- The following parameters used in several jobrunner and pipelinestore REST APIs have been deprecated and will be removed in a future release:

- system

- systemjob

- includeSystemJobStatus

Previously, setting these parameters to true returned no pipelines or jobs because system pipelines and system jobs do not exist in platform Control Hub. With this release, Control Hub ignores these parameters and returns all pipelines or jobs meeting the remainder of the API call parameters.
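
Because the deprecated parameters are now ignored and will eventually be removed, client scripts can drop them from request URLs ahead of time. The following is a minimal sketch that uses only the Python standard library. It reads the three parameter names from the note above as system, systemjob, and includeSystemJobStatus, which is an assumption about how the list splits. The helper name and the example URL are placeholders, not real Control Hub endpoints.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed reading of the deprecated parameter names from the release note.
DEPRECATED_PARAMS = {"system", "systemjob", "includeSystemJobStatus"}


def strip_deprecated_params(url):
    """Return the URL with any deprecated query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in DEPRECATED_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


# Placeholder URL for illustration only.
print(strip_deprecated_params("https://example.invalid/api?system=true&offset=0&len=50"))
```

Removing the parameters on the client side does not change the results, because Control Hub already ignores them.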

Fixed Issues

- A subscription triggered by a job status change event that does not use the JOB_OWNER parameter in the email action or event condition fails to send an email when the job owner no longer exists.

- Runtime parameters do not display in the job instance details.

- The advanced organization properties might display uneditable IP Auth Rules properties configured with test values.

- When configuring an engine to use a proxy server, the http.nonProxyHosts property defined on the Proxy tab does not always take effect.

July 2024

The following StreamSets platform releases occurred in July 2024.

July 24, 2024

This release includes enhancements and several fixed issues.

Enhancements

- Sequences

- You can view the history and errors for all runs of a sequence. When viewing all history or errors, you can search for historical log messages or errors by date. You can also delete log messages and errors for a sequence.

- Product rename

- Following the IBM acquisition of StreamSets, platform Control Hub is part of what is now known as IBM StreamSets.

Fixed Issues

- Users with the Job Operator and Pipeline User roles receive an HTTP 403 forbidden error when accessing the Job Instances and Job Templates views.

- After installing a stage library from the pipeline canvas and then restarting the engine, the stage library panel still displays the newly installed stage as disabled.

- Importing pipelines using the Control Hub REST API /v3/importer/{schVersion}/pipeline does not set the pipelineCommitID in the rules.

July 11, 2024

This release includes a fixed issue.

Fixed Issue

- If you are required to verify your email when you accept an email invitation to join an existing organization, you are prompted to create a new organization rather than join the existing organization.

June 2024

The following StreamSets platform releases occurred in June 2024.

June 26, 2024

This release includes several enhancements, behavior changes, and fixed issues.

Enhancements

- Pipeline and fragment import

- When you import a pipeline or fragment, you select the file to import and then the import dialog box automatically detects the file type based on your selection, either an archive ZIP file or a JSON file.

- Kubernetes environments

- Kubernetes environments support a new KUBERNETES_2024_06_14 feature version which adds startup and liveness probes to all deployments belonging to the environment.

- Self-managed deployments

- Self-managed deployments include the following enhancements:

- Engine installation type - The deployment wizard for self-managed deployments has been simplified. Instead of displaying a separate Install Type step, the wizard now has you choose the engine installation type, either a Docker image or a tarball file, in the Review & Launch step.

- Proxy server configuration - For self-managed deployments using a Docker image installation of Data Collector 5.11.0 or later, Transformer 5.8.0 or later, or Transformer for Snowflake 5.2.0 or later, you can more easily configure the engine to use a man-in-the-middle proxy server. When configuring the deployment, you can include the custom certificate required by the server in the engine advanced configuration properties.

- Engine Java version defined for deployments

- The engine Java version defined for deployments includes the following enhancements:

- Java version for self-managed deployments using a Docker image - When you configure a self-managed deployment using a Docker image for Data Collector 5.10.0 or later, you now define the Java version in the engine advanced configuration properties.

Previously, you defined the Java version in the Review & Launch step of the deployment wizard when creating the deployment, or in the Install Engine Script dialog box when retrieving the installation script for an existing deployment.

- Amazon EC2 and GCE deployments - When you configure an Amazon EC2 or GCE deployment for Data Collector 5.11.0 or later, you can define the Java version for the engine to run on.

In most cases, you can use the default Java version. Some Data Collector stage libraries and use cases require specific Java versions.

- Default Data Collector Java version - When you configure a Control Hub-managed deployment or a self-managed deployment using a Docker image for Data Collector 5.11.0 or later, Control Hub now deploys and installs Java 17 by default.

For earlier Data Collector versions, Control Hub deployed and installed Java 8 by default.

Behavior Changes

- Table columns reset to defaults

- With this release, all resized, reordered, and hidden table columns are reset to the defaults.

- Engine log file location for Amazon EC2 or GCE deployments of Data Collector 5.11.0 or later

- With this release, the engine log file for Amazon EC2 or GCE deployments of Data Collector 5.11.0 or later is located in the /logs directory.

Fixed Issues

- The pagination buttons in the Scheduled Tasks view are greyed out even when there are more scheduled tasks to display.

- The pipeline canvas fails to check in a pipeline when an input field is focused before you click the Check In icon.

May 2024

The following StreamSets platform releases occurred in May 2024.

May 22, 2024

This release includes several enhancements and fixed issues.

Enhancements

- Connection upgrades

- When you edit a connection and choose an authoring Data Collector of a later version that includes changes to the connection properties, you can now choose to upgrade the connection to use the changed properties. Alternatively, you can choose to create a copy of the connection and then upgrade the copy to use the changed properties.

- Sequences

- Sequences include the following enhancements:

- When you view the details of a sequence, you can click the name of a job to navigate to the job details.

- The Enable Sequence and Disable Sequence icons now include a label to clearly indicate the purpose of the icon.

- Banner notifications

- You might now see notification messages in the top banner that provide important information about the StreamSets platform. After reading the messages and taking any appropriate action, you can dismiss the messages.

Fixed Issues

- Existing job tags do not display when you edit a job from the Job Instances list.

April 2024

The following StreamSets platform releases occurred in April 2024.

April 26, 2024

This release includes several new features, enhancements, and fixed issues.

New Features and Enhancements

- Sequences

- You can create a sequence to run a collection of jobs in specified order based on conditions.

- Search

- You can use the following new properties to search for pipelines or fragments:

- Stages - Search for pipelines or fragments that include the selected stage. For example, you might search for all pipelines that include an Amazon S3 stage.

- Stage Libraries - Search for pipelines or fragments that use stages included in the selected stage library. For example, you might search for all fragments that use stages included in the Apache Kafka 3.1.0 stage library.

- Kubernetes deployments

- When you configure a Kubernetes deployment for Data Collector 5.10 or later, you can define the Java version for the engine to run on.

- Engine credential store properties defined for deployments

- When you enter sensitive data such as a password for credential store properties in the engine advanced configuration, Control Hub displays the sensitive values as REDACTED after you save the deployment.

- Stage library mode defined for deployments

- For advanced use cases, you can now configure a deployment to use the user-provided stage library mode where you provide the stage library files during the engine installation. For example, you might use the user-provided stage library mode if your organization requires that the stage library files be scanned for security purposes before they are installed on the engine machines.

Fixed Issues

- A deployment configured to use a proxy server ignores the http.nonProxyHosts property when it includes a CIDR block.

- You cannot export a pipeline that includes a connection specified as a parameter.

March 2024

The following StreamSets platform releases occurred in March 2024.

March 20, 2024

This release includes an enhancement and several fixed issues.

Enhancement

- Deployment Java version

- When you configure an Azure VM deployment or a self-managed deployment using a Docker image for Data Collector 5.10 or later, you can define the Java version for the engine to run on. In most cases, you can use the default Java version. Some Data Collector stage libraries and use cases require specific Java versions.

Fixed Issues

- Clicking the Last page icon in the Scheduled Tasks view displays the next page of results instead of the last page.

- Clicking Show Diff for a cloned deployment of a Transformer engine does not display the differences between the original and the cloned deployment.

- Scheduled tasks sporadically fail to start, sometimes displaying a Connection is closed error in the run history.

- A subscription that sends notifications when the job status changes to red incorrectly triggers for Transformer for Snowflake jobs.

February 2024

The following StreamSets platform releases occurred in February 2024.

February 21, 2024

This release includes an enhancement and a fixed issue.

Enhancement

- Job run history

- When you view the run history of a job and expand the details of a run, Control Hub displays a last-saved offset only when the offset contains information. When the offset is empty, no offset is shown.

Fixed Issue

- When you search for jobs by Engine Label, auto-completion loads indefinitely.

February 7, 2024

This release includes an enhancement and a fixed issue.

Enhancement

- SAML identity providers

- In the SAML authentication configuration, the Microsoft Azure Active Directory (Azure AD) identity provider has been renamed to the Microsoft Entra ID identity provider to match the Microsoft rebranding.

Fixed Issue

- When you sign in to the StreamSets platform with email, Google, or Microsoft after having previously signed in with a different option, you might encounter the following error:

The requested action is invalid.

January 2024

The following StreamSets platform releases occurred in January 2024.

January 24, 2024

This release includes an enhancement and several fixed issues.

Enhancement

- AWS environments

- In addition to manually configuring the AWS credentials that Control Hub uses to access and provision resources in your AWS account, you can have StreamSets automatically configure a cross-account role for you.

Fixed Issues

- External resources do not work when stored in a subfolder in a private Google Cloud Storage bucket.

- Sorting scheduled tasks by the next execution time sorts the tasks in the current page only rather than all of the tasks.

- When Data Collector 5.7.0 and later or Transformer 5.6.0 and later are configured to use a proxy server, wildcard domains specified in the http.nonProxyHosts property are not correctly evaluated.
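
To illustrate what evaluating wildcard domains involves, the following is a rough sketch of matching a host against a list of non-proxy patterns. It assumes the usual Java convention of patterns separated by a vertical bar with * as a wildcard, and it also accepts CIDR blocks, which other notes on this page mention. The function name is hypothetical, and the code is not the engine's implementation.

```python
import ipaddress
from fnmatch import fnmatchcase


def bypasses_proxy(host, non_proxy_hosts):
    """Return True if host matches any '|'-separated pattern in non_proxy_hosts."""
    patterns = [p.strip() for p in non_proxy_hosts.split("|") if p.strip()]
    for pattern in patterns:
        if "/" in pattern:
            # Treat the pattern as a CIDR block; it can only match an IP literal.
            try:
                address = ipaddress.ip_address(host)
                network = ipaddress.ip_network(pattern, strict=False)
            except ValueError:
                continue
            if address in network:
                return True
        elif fnmatchcase(host.lower(), pattern.lower()):
            # Host names are case-insensitive, so compare in lower case.
            return True
    return False


print(bypasses_proxy("api.internal.example.com", "localhost|*.example.com"))
print(bypasses_proxy("10.1.2.3", "localhost|10.0.0.0/8"))
print(bypasses_proxy("example.org", "localhost|*.example.com|10.0.0.0/8"))
```

In this sketch, a pattern such as *.example.com matches subdomains but not example.com itself, so both forms would need to be listed to bypass the proxy for both.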

January 4, 2024

- Pipelines intermittently do not display in the pipeline canvas.

- Jobs intermittently generate an error as you attempt to save the job, and then do not save.

2023

December 2023

The following StreamSets platform releases occurred in December 2023.

December 20, 2023

This release includes several enhancements and fixed issues.

Enhancements

- Search

- Search includes the following enhancements:

- You can use the new Pipeline ID property to search for pipelines or fragments by the pipeline ID.

- The ID property available for pipelines and fragments has been renamed to Commit ID to clarify that you use the property to search for pipelines or fragments by the commit ID.

Existing saved searches for pipelines or fragments that use the previous ID property name continue to work and find pipelines or fragments by the commit ID.

- Environments

- When you create an environment, you select the feature version to use for that environment and for all deployments created for the environment.

- Deployments

- Deployments include the following enhancements:

- Cloning deployments - Clone a deployment to quickly create another deployment with similar configurations. You can select the same engine version or a later engine version than used in the original deployment.

You might clone a deployment as the first step in the engine upgrade process. When you edit a cloned deployment that uses a later engine version than the original deployment, you can view and compare the engine configuration differences between the original and cloned deployments.

- Amazon EC2 deployments - You can configure an Amazon EC2 deployment to provision EC2 spot instances, in addition to on-demand instances. Requires the AWS_2023_12_15 environment feature version.

Amazon EC2 deployments that use the initial feature version provision EC2 on-demand instances only.

- Azure VM deployments - An Azure VM deployment for Data Collector 5.8.0 and later deploys the Data Collector engine as a tarball rather than a Docker image.

Azure VM deployments for earlier Data Collector versions and for Transformer continue to deploy those engines as a Docker image.

- Organization security

- The primary organization administrator can designate another user assigned the Organization Administrator role to be the primary organization administrator.

Fixed Issues

- You cannot export a draft pipeline or fragment using the Export option that removes plain text credentials.

- You cannot import a draft pipeline that includes a fragment.

November 2023

The following StreamSets platform releases occurred in November 2023.

November 15, 2023

This release includes several enhancements, a behavior change, and fixed issues.

Enhancements

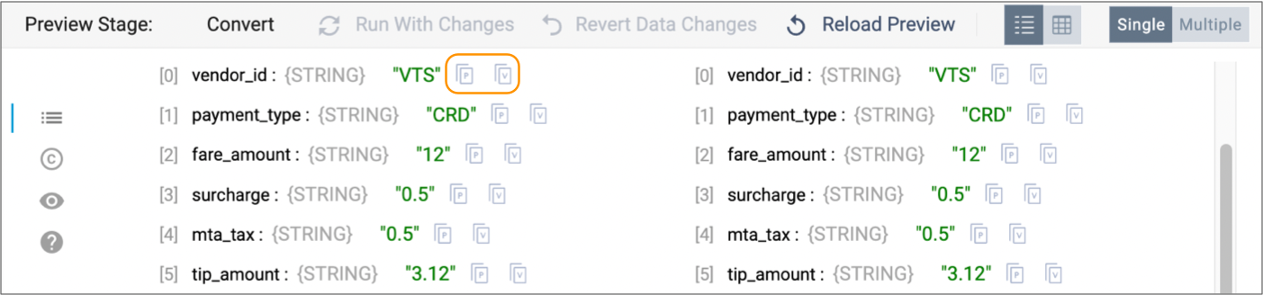

- Preview

- Preview includes the following enhancements:

- Keyboard shortcut - You can use Command+Return on Mac and Ctrl+Enter on Windows machines to start previewing pipelines and fragments. Control Hub uses the existing preview configuration for the preview.

- Table view - When you configure preview to display field types, the field type now shows in Table view next to the field name, as it does in list view.

- Environments

- When you view environment details in the Environments view, you can view the list of deployments that belong to the environment. To view details about a specific deployment, click the deployment name.

- Deployments

- Deployments include the following enhancements:

- All deployment types - When you view deployment details in the Deployments view, you can click the name of the parent environment to navigate to the environment details.

- Kubernetes deployments - You now configure advanced mode for a Kubernetes deployment in the main deployment wizard rather than in a separate dialog box.

- Scheduled tasks

- When creating a scheduled task that runs on a minute basis, you can specify which minute to start the job at. For example, if you create a scheduled task that runs every 15 minutes, you can schedule the task to start at 3 minutes after every hour, such that the task starts at 2:03, 2:18, 2:33, and 2:48.

- Organization default system limits

- StreamSets has added a default system limit for the number of groups that can exist in an organization. The limit is 1,000 groups.
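
The scheduled task example above (every 15 minutes, starting 3 minutes after the hour) can be checked with a few lines of arithmetic. This is a minimal sketch with hypothetical function names. It only reproduces the start times from the example and does not reflect how Control Hub schedules tasks internally.

```python
def start_minutes(interval_minutes, offset_minutes):
    """Minutes past the hour at which a task with the given interval and offset starts."""
    first = offset_minutes % interval_minutes
    return list(range(first, 60, interval_minutes))


def start_times(hour, interval_minutes, offset_minutes):
    """Format the start times within a single hour as H:MM strings."""
    return [f"{hour}:{minute:02d}" for minute in start_minutes(interval_minutes, offset_minutes)]


print(start_times(2, 15, 3))  # ['2:03', '2:18', '2:33', '2:48']
```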

Behavior Change

- Pipeline and fragment version history

- With this release, StreamSets has simplified pipeline and fragment version history. You can now access the version history of a pipeline or fragment from the pipeline canvas only.

Previously, you could access different views of the version history - one from the pipeline canvas and another from the Pipelines or Fragments view.

Fixed Issues

- When users without the Organization Administrator role try to share an object that they have read access to, the Sharing Settings dialog box displays empty user and group names.

- While editing a connection with an engine of a more recent version than the one the connection was created with, the wizard never moves to the second step for some connection types.

- When a self-managed deployment defines a percentage JVM memory strategy for engines, the following Docker engine installation types on Linux with cgroup v2 enabled incorrectly allocate a percentage of the available memory on the host machine as the Java heap size, instead of the available memory in the Docker container:

- Data Collector

- Transformer prebuilt with Scala 2.11
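
The difference between the incorrect and the intended heap calculation can be sketched as follows. On cgroup v2, the container memory limit is exposed in the memory.max file, which holds either a byte count or the word max when no limit is set. The function name and the sample sizes are hypothetical, and this is not the engine's actual startup logic.

```python
def heap_bytes(percent, host_memory_bytes, cgroup_memory_max):
    """Heap size as a percentage of the memory available to the container.

    cgroup_memory_max is the text content of the cgroup v2 memory.max file.
    """
    text = cgroup_memory_max.strip()
    if text == "max":
        # No container limit is set, so the host memory is the effective limit.
        limit = host_memory_bytes
    else:
        limit = min(int(text), host_memory_bytes)
    return limit * percent // 100


GIB = 1024 ** 3

# A 4 GiB container on a 64 GiB host with a 50% memory strategy.
print(heap_bytes(50, 64 * GIB, str(4 * GIB)) // GIB)  # 2
# Basing the percentage on host memory, as in the issue above, gives 32 GiB.
print((64 * GIB * 50 // 100) // GIB)  # 32
```

Sizing the heap from host memory can exceed the container limit, which typically causes the container to be killed when the heap grows.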

November 1, 2023

- When trying to join an existing StreamSets organization using a Microsoft corporate email account, users are guided to create a new organization instead.

October 2023

The following StreamSets platform releases occurred in October 2023.

October 27, 2023

This release includes several enhancements and fixed issues.

Enhancements

- Search

- When performing a basic string search, you can select the operator does not contain to find objects that do not contain a specified string. For example, you can define a basic search condition that finds all pipelines that do not contain the string ADLS2 in the pipeline name.

- Monitoring jobs

- When viewing the engine log for an active job, you can select another engine that you want to view the log for.

- Editing environments and deployments

- When you edit an existing environment or deployment, you can easily scroll to the configuration properties that you want to change.

- Kubernetes deployments

- When using advanced mode to configure a Kubernetes deployment, you can download the YAML file, edit the file in a text editor, and then upload the edited file.

Fixed Issues

- If you use bulk edit mode to define runtime parameters for a job instance or job template and change the value of a runtime parameter to a number, you cannot edit the job instance or job template.

- Control Hub incorrectly retrieves the latest offset for a single instance job, causing an outdated or incorrect offset to display.

- Sorting scheduled tasks by the Next Execution Time property does not change the order in which the tasks are displayed.

- When you change the owner of a pipeline, the new owner is not granted permission on the pipeline.

October 6, 2023

This release includes the following fixes:

- When editing a scheduled task, the task incorrectly changes from daily to hourly and vice versa.

- A warning message displays when you change the default value of the Column Separator, Quote Character, or Escape Character property for a pipeline stage and new values are not set.

September 2023

The following StreamSets platform releases occurred in September 2023.

September 29, 2023

- Fields that previously held multiple lines of code, but were intended to have a single line, do not display the code.

September 27, 2023

This release includes several enhancements, fixed issues, and a behavior change.

Enhancements

- Expression language display

- StreamSets expressions display strings in red, and display the dollar sign and curly brackets that surround the expression in purple.

- Code editors

- Code editors allow entering a block of code, as for a JavaScript Evaluator processor. Code editors include the following enhancements:

- When available, you can use a right arrow icon to collapse a section, and the down arrow icon to expand the section again.

- To toggle a code editor to full screen and back, use F11 or Ctrl+B on Windows or Command+B on Mac. You can no longer use the Esc key to toggle the display.

- On a properties page that includes a code editor, the Tab key moves the focus to the next property.

As a result, you can no longer use the Tab key to add indents within the code editor.

To add or reduce indents in the code editor, use the Ctrl key with square brackets ( ] [ ) on Windows or the Command key with square brackets ( ] [ ) on Mac.

- Expression completion

- Usability enhancements to expression completion include the following notable updates:

- Expression completion displays new icons for element types, as follows:

- Square icon ( □ ) for fields

- c icon for constants

- f icon for functions

- x icon for runtime parameters

- Expression completion displays the results of all element names that contain the specified characters. Previously, it only displayed element names that started with the characters.

Note: With this change, you can no longer use the Tab key to accept expression completion suggestions. Instead, use the Return/Enter key, or select the suggestion.

- Connection Details page

- The Connection Details page has been enhanced to display all non-sensitive connection details, such as the authentication type or resource URL.

- Default initial offset

- You can upload a default initial offset for a job where the pipeline has previously run and pipeline failover is disabled for the job.

- Job template details

- When you select a job template to use when creating a job, the list of job templates displays with additional details and filters to enable choosing the correct template more easily.

- Quick Start pipelines

- Pipelines that you create using the Quick Start button are named Quick Start Pipeline <timestamp> by default. You can update pipeline names as needed.

- Webhook subscription content type

- When configuring a webhook subscription, if you do not specify a content type for the webhook, Control Hub uses text/plain as the content type.
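
The webhook default described above can be pictured with a minimal sketch. The function and constant names are hypothetical and are not part of any Control Hub API; the sketch only illustrates falling back to text/plain when no content type is configured.

```python
# Hypothetical illustration of the default; not Control Hub code.
DEFAULT_CONTENT_TYPE = "text/plain"


def resolve_content_type(configured):
    """Return the configured content type, or text/plain when none is set."""
    # Treat None and blank strings as "not specified" (an assumption).
    if configured is None or not configured.strip():
        return DEFAULT_CONTENT_TYPE
    return configured.strip()
```

A receiving system that expects JSON would therefore need the content type set explicitly on the webhook, for example to application/json.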

Behavior Change

- Balancing and synchronizing jobs

- When you balance or synchronize a Data Collector job, Control Hub now temporarily stops the job to ensure that the last-saved offset from each pipeline instance is maintained. Control Hub then reassigns the pipeline instances to Data Collectors and restarts the job.

Fixed Issues

- Control Hub allows deleting a shared job associated with a scheduled task, resulting in a scheduled task that attempts to trigger actions on a job that no longer exists.

- If a StreamSets Kubernetes agent loses network connectivity for an extended time, then recovers, Control Hub has the agent shut down instead of reconnecting to it. With this fix, Control Hub reconnects to the agent as long as a different StreamSets Kubernetes agent has not replaced it.

- Metric rules and alerts based on the pipeline or stage memory consumption do not behave as expected. These rules have been removed.

- Write to Another Pipeline was not a valid pipeline error handling option. This option has been removed.

- External libraries, such as JDBC drivers, stored outside of the default location fail to load when Data Collector uses Java 8 with the Java Security Manager enabled.

- When configuring a GCE deployment, the Instance Service Account property displays a maximum of 20 service accounts. If your GCP project includes more than 20 service accounts, the service account created as a Control Hub environment prerequisite might not display in the list.

August 2023

The following StreamSets platform releases occurred in August 2023.

August 23, 2023

This release includes several enhancements and fixed issues.

Enhancements

- Using runtime parameters in pipelines

- To use runtime parameters to define values for stage and pipeline properties, you can now select from a list of existing parameters or you can create and then use a new parameter.

- Restart engines for an active deployment

- You can restart all engine instances belonging to an active deployment from the Deployments view.

- Mapping jobs in a topology

- When you edit a topology, you can search for the jobs that you want to map to the topology. You can perform both basic and advanced searches.

- Authoring engine timeout

- An organization administrator can modify the default authoring engine timeout in the organization properties. Individual users can then override the default organization value in their browser settings within the My Account window.

- Organization default system limits

- StreamSets has increased the default system limits for the following types of objects:

- Jobs - Increased from 500 to 10,000. The system limit for active jobs that can run concurrently is 1,000.

- Scheduled tasks - Increased from 100 to 5,000.

Fixed Issues

- Pipeline preview incorrectly showed multiple spaces in record values as a single space.

- When using the Stream Selector processor, connecting the last stream when there were more than 4 streams required clicking on the very edge of the icon.

- The Job Templates, Job Instances, and Draft Runs views display duplicate preset searches.

- When a pipeline is using an inaccessible engine and then you switch to an accessible engine, Control Hub incorrectly indicates that the newly selected engine is not accessible until you refresh the browser.

- When you edit a job using the context menu on the right side of the list of jobs, the Number of Instances property is always set to 0.

- When you update an external resource archive to remove a file and then restart all engine instances in the deployment, Control Hub copies the updated archive file contents to the engine instances without first removing the deleted file. As a result, the file deleted from the archive still exists in the engine instances.

August 18, 2023

This release includes a behavior change.

Behavior Change

- Update firewall outbound allowlists

- The StreamSets authentication service, https://cloud.login.streamsets.com, is now hosted in another cloud service provider.

July 2023

The following StreamSets platform releases occurred in July 2023.

July 28, 2023

This release includes a behavior change.

Behavior Change

- Update firewall outbound allowlists

- In an upcoming release, the StreamSets authentication service, https://cloud.login.streamsets.com, will be hosted in another cloud service provider.

July 21, 2023

This release includes several enhancements and fixed issues.

Enhancements

- Scheduled tasks

- When you view the list of scheduled tasks, the next scheduled execution time displays for each task.

- External resources

- You can store an external resource archive file in a private Amazon S3 or Google Cloud Storage (GCS) bucket or in a private Azure Blob Storage or Azure Data Lake Storage Gen2 container, as long as the deployment using the archive file is of the same cloud service provider type. For example, if using an Amazon EC2 deployment, you can store the archive file in a private Amazon S3 bucket. You cannot store the archive file in a private GCS bucket.

- Engine resource thresholds

- A deployment defines the maximum thresholds for the following engine resources:

- CPU load

- Memory used

- Number of running pipelines

- Self-managed deployments

- When you stop a self-managed deployment, running engine instances belonging to that deployment are also shut down. After you restart the self-managed deployment, you manually start each engine instance from the command line.

- Google Compute Engine (GCE) deployments

- When you create a GCE deployment, you specify whether to use an automatic or user managed replication policy to store GCP Secret Manager secrets. If you specify a user managed policy, then you also select one or more locations to replicate the secrets to.

Behavior Change

- Review engine threshold settings in automated testing

- With this release, a deployment defines maximum thresholds for the following engine resources: CPU load, memory used, and number of running pipelines. To use different thresholds for an individual engine, you enable an override for the engine. Previously, these thresholds were defined only at an engine level.

Fixed Issues

- When you export a draft pipeline or fragment, Control Hub does not remove plain text credentials configured directly in the pipeline or fragment.

- A Control Hub Kubernetes deployment becomes stuck in an Activating state when you configure only the http.nonProxyHosts property for the engine proxy properties.

- The Create Scheduled Task dialog box displays blank error messages.

- When a deployment defines a percentage JVM memory strategy for engines, a Docker engine installation incorrectly allocates a percentage of the available memory on the host machine as the Java heap size, instead of the available memory in the Docker container.

June 2023

The following StreamSets platform releases occurred in June 2023.

June 14 and 21, 2023

This release includes several enhancements and fixed issues.

The release occurred in two phases across all Control Hub instances in the StreamSets platform. The first phase occurred on June 14, 2023, and the second phase occurred on June 21, 2023.

Enhancements

- Welcome view

- After you log in, Control Hub displays a Welcome view that lists your most recently edited pipelines and jobs so that you can quickly access them.

- Quick Start

- Click Quick Start in the top toolbar to quickly build a Data Collector or Transformer for Snowflake pipeline. A blank pipeline opens in the canvas so that you can immediately begin building the pipeline using all available stages. For a Data Collector pipeline, you must select or deploy an engine before you can preview, run, validate, or check in the pipeline.

- Edit Data Collector pipelines when engine is not accessible

- When using Data Collector version 5.4.0 or later, you can edit pipelines and fragments in the pipeline canvas when the engine is not accessible. The engine must be accessible before you can preview, run, validate, or check in the pipeline or fragment.

- Import pipelines and fragments

- When you import a pipeline or fragment that uses connections, you can choose how to replace each connection based on whether the connection exists in the target organization:

- Connection exists - You can choose to use the existing connection, create a placeholder connection, or remove the connection.

- Connection does not exist - You can choose to create a placeholder connection or remove the connection.

- Job templates

- When you delete a job template that has attached job instances, Control Hub also deletes the attached job instances at the same time.

- Control Hub-managed deployments

- Control Hub-managed deployments include the following enhancements:

- For Amazon EC2, Azure VM, and GCE deployments, the Engine Instances property has been renamed to Desired Instances.

- For Amazon EC2, Azure VM, GCE, and Kubernetes deployments, you can set the Desired Instances property to 0 to temporarily prevent engine instances from running, as an alternative to stopping the deployment.

Previously, the minimum number of instances was 1. You had to stop the deployment to temporarily prevent engine instances from running.

- Groups

- Groups require a unique display name in addition to a unique group ID. Previously, groups required only a unique group ID.

- Alerts

- When the alert text defined for a data SLA or pipeline alert exceeds 255 characters, Control Hub truncates the text to 244 characters and adds a [TRUNCATED] suffix to the text so that the triggered alert is visible when you click the Alerts icon in the top toolbar.
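
The truncation rule above works out to exactly 255 characters, because the [TRUNCATED] suffix is 11 characters long and 244 + 11 = 255. A minimal sketch of the arithmetic follows. The function name is hypothetical, and the sketch assumes that the suffix is appended directly with no separator; it is not the Control Hub implementation.

```python
# Hypothetical illustration of the truncation rule; not Control Hub code.
MAX_ALERT_TEXT = 255      # longest alert text kept as-is
KEPT_CHARACTERS = 244     # characters kept when the text is too long
SUFFIX = "[TRUNCATED]"    # 11 characters, so 244 + 11 = 255


def truncate_alert_text(text: str) -> str:
    """Return the alert text, truncated and suffixed when it exceeds 255 characters."""
    if len(text) <= MAX_ALERT_TEXT:
        return text
    # Assumption: the suffix follows the kept text with no separator.
    return text[:KEPT_CHARACTERS] + SUFFIX
```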

Fixed Issues

- If you change the values of existing pipeline fragment parameters, publish a new version of the fragment, and then update a pipeline using that fragment to use the latest version, the update incorrectly changes the default values for any existing runtime parameters in the pipeline.

- Fragment export fails when a fragment contains multiple stages of the same type that use different library versions.

- When you select a stage in the canvas while monitoring a running Data Collector or Transformer pipeline, an “Unexpected error occurred” message temporarily displays.

- When configuring a GCE deployment, you are required to select a service account even if the GCP environment has a default service account defined. Similarly, when configuring an Azure VM deployment, you are required to select a managed identity and resource group, even if the Azure environment has defaults defined for those objects.

May 2023

The following StreamSets platform releases occurred in May 2023.

May 12, 2023

This release includes several enhancements and a fixed issue.

Enhancements

- Fragment icons

- By default when you add a published fragment to a pipeline, the fragment displays as a single stage with a puzzle piece icon. You can modify the icon to represent the fragment processing logic. For example, if a fragment merges two streams of data, you might configure the fragment to use the predefined Merge icon.

- Pipeline canvas toolbar

- The new pipeline canvas UI displays the following icons in different colors, making it easier to locate these icons in the toolbar:

- The Stop and Force Stop icons display in red.

- The Check In icon displays in red when the pipeline or fragment has not passed implicit validation and cannot be checked in. The Check In icon displays in green when the pipeline or fragment has passed implicit validation and is ready to be checked in.

- Fragment export

- You can export and import draft fragments.

- GCE deployments

- You can optionally configure a GCE deployment to provision Google Cloud VM instances without external IP addresses.

Fixed Issue

- If the last page of results for a job search previously returned items, but no longer does, no items are shown on the page. Users must navigate to the previous page to see items.

April 2023

The following StreamSets platform releases occurred in April 2023.

April 23, 2023

This release includes a fixed issue.

Fixed Issue

- When a deployment uses an external resource archive and the externalResources folder contains an empty user-libs folder, engines belonging to the deployment fail to start with the following error message:

Exception in thread "main" java.lang.IllegalArgumentException: Stage libraries directory '/<installation directory>/externalResources/user-libs' does not exist

April 21, 2023

This release includes several enhancements and fixed issues.

Enhancements

- First login to StreamSets

- When you log in to StreamSets for the first time and you do not have access to an engine deployed by another user, a blank Data Collector pipeline opens in the canvas. You can immediately begin building the pipeline using all available stages.

- Pipeline canvas

- When using the new pipeline canvas UI and you select a link connecting two stages, Control Hub displays a pop-up menu that includes the following icons:

- Insert Stage - Inserts another stage between the connected stages.

- Delete - Deletes the selected link.

- Job templates

- On the Job Templates view, the Start Jobs menu option has been renamed to Create Instances to more clearly indicate that you are creating and starting job instances from a job template.

Fixed Issues

- Users and engines randomly encounter 401 authorization errors from Control Hub.

- Editing an existing job template fails with the following error:

'len' parameter must be a valid integer equal to -1 or greater than zero. len = '0' : RESTAPI_05

- Searching for a pipeline by ID fails with the following error:

"RSQL_00:Bean 'RPipeline' property 'id' does not have a mirrored property in 'PPipeline'"

- The pipeline canvas incorrectly enables the Draft Run menu even though the selected authoring engine is not accessible.

- When you modify or delete a parameter from a fragment being used in a pipeline, publish the fragment, and then update the pipeline to use the latest fragment version, the parameter changes are not reflected in the pipeline.

- If you use advanced mode to edit the dnsPolicy attribute in a Control Hub Kubernetes YAML file, your modifications do not take effect. The deployment always uses the Default DNS policy.

- If you specify a service account name for a Control Hub Kubernetes deployment, start the deployment, and then edit the active deployment to delete the service account name, the change does not take effect and the previous service account remains associated with the Kubernetes deployment.

March 2023

The following StreamSets platform release occurred in March 2023.

March 29, 2023

This release includes several enhancements and fixed issues.

Enhancements

- Search

- Search includes the following enhancements:

- Control Hub includes additional preset searches for fragments, pipelines, job templates, job instances, and draft runs that are starred by default.

- When searching for pipelines or fragments, you can use the Latest Version property to restrict searches to the latest version of each pipeline or fragment.

- Pipeline and fragment preview

- Previewing pipelines and fragments includes the following enhancements:

- When you preview a pipeline or fragment, Control Hub uses the default preview configuration and no longer displays the Preview Configuration dialog box. While running preview, you can click the Preview Configuration icon to change the configuration and then run the preview again.

- When you preview a Transformer or Transformer for Snowflake pipeline or fragment, the data displays in table view by default. When you preview a Data Collector pipeline or fragment, the data continues to display in list view by default.

- Table view displays only output data by default. You can optionally choose to display both input and output data.

- Table view no longer displays colors for different types of data and changed data. List view continues to display colors.

- While running preview, you can click the Expand icon to quickly expand the preview panel to view more preview data.

- Pipeline validation

- If pipeline validation fails due to a timeout error, you can increase the validation timeout value.

- Job templates

- When you create job instances from a job template, you can specify whether the job instances inherit the permissions assigned to the parent job template.

- Pipeline export

- You can export and import draft pipelines.

- Customize table columns

- You can resize, hide, and reorder the table columns displayed in all views.

- Azure VM deployments

- The tracking URL for an active Azure VM deployment opens the overview page of the Azure deployment created for your StreamSets deployment. The deployment overview page enables you to more quickly access information about the Azure resources provisioned for the StreamSets deployment.

Fixed Issues

- Basic searches that use the not includes operator or advanced searches that use the =out= operator incorrectly return results that do include the specified values.

- If you view the run history of a job and the pipeline version for one of the runs has been deleted, then the run history displays an error.

- When a pipeline fails over to another Data Collector, the input and output records that display in the Summary tab and in the run summary for a selected job run accessed from the History tab do not include the records for all Data Collectors.

- Control Hub allows you to set the CPU Threshold Percentage for a Control Hub Kubernetes deployment to a value between 0 and 100, but 0 is not a valid value in the Kubernetes cluster.

February 2023

The following StreamSets platform releases occurred in February 2023.

February 10, 2023

This release includes several new features, enhancements, behavior changes, and a fixed issue.

New Features and Enhancements

- Kubernetes integration

- Control Hub provides an integration with Kubernetes. When you use Control Hub Kubernetes environments and deployments, you launch a StreamSets Kubernetes agent that runs in your Kubernetes cluster. The agent communicates with Control Hub to provision the Kubernetes resources needed to run StreamSets engines and to deploy engine instances to those resources.

- Search

- Search is no longer a Technology Preview functionality and is now enabled by default. Search replaces filtering and is implemented for the following object types:

- Fragments

- Pipelines

- Job templates

- Job instances

- Draft runs

- Pipeline canvas

- By default, Control Hub now displays the new pipeline canvas UI.

- Customize table columns

- You can resize, hide, and reorder the table columns displayed in the Job Templates, Job Instances, and Draft Runs views.

Behavior Changes

- Saved searches

- With this release, Control Hub stores your saved searches in the backend. Previously, Control Hub stored saved searches in your current browser. Saved searches did not apply if you logged in using another browser, and saved searches were removed if you cleared the browser cache.

- Job instances with no job runs

- With this release, Control Hub automatically deletes inactive job instances older than 365 days that have never been run.
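
The deletion rule above combines three conditions: the job instance is inactive, it has never been run, and it is older than 365 days. A minimal sketch of that check follows. The JobInstance model and its field names are hypothetical, and measuring age from the creation time is an assumption; this is not the Control Hub implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=365)


@dataclass
class JobInstance:
    """Hypothetical model of a job instance, for illustration only."""
    name: str
    created: datetime
    active: bool
    run_count: int


def is_deletion_candidate(job: JobInstance, now: datetime) -> bool:
    """True when the job is inactive, has never run, and is older than 365 days."""
    # Assumption: age is measured from the creation time.
    return (not job.active) and job.run_count == 0 and (now - job.created) > RETENTION
```

A job instance that has run at least once is not matched by this rule, regardless of its age.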

Fixed Issue

- When a pipeline fails over to another Data Collector, the input and output records that display in the job history do not include the records for the original Data Collector.

February 7, 2023

This release includes a fixed issue.

Fixed Issue

- When SCIM provisioning is enabled and Microsoft Entra ID synchronizes users with expired Control Hub invitations, these users cannot log into Control Hub, even though the users have been correctly provisioned.

January 2023

The following StreamSets platform release occurred in January 2023.

January 20, 2023

This release includes several enhancements and fixed issues.

Enhancements

- View and edit connection details from a published pipeline

- When viewing a published pipeline that includes a stage using a connection, you can click the Edit Connection icon in the stage properties to view and edit the connection details.

- Customize table columns

- You can resize, hide, and reorder the table columns displayed in the Connections view.

- Job run history for job instances started from a job template

- Control Hub now includes job instances started from a job template in the total count of job runs for the organization. As a result, Control Hub purges the run history for job instances started from a job template in the same way that it purges the run history for job and draft runs.

- Search

- The Technology Preview search functionality includes the following enhancements:

- You can search for attached job instances created from job templates.

- You can search for job instances that include a pipeline with a specified pipeline status.

- You can search for job templates that have been archived.

- In basic search, you can use auto-completion for the following search properties:

- Label property for pipelines and fragments

- Tag and Engine Label properties for job instances and job templates

As you begin typing a value to search for, Control Hub displays a drop-down menu that lists values that match the entered characters.

Fixed Issues

- Preview does not work when the Run Preview Through Stage property is set to a fragment.

- The Engines view does not correctly sort the list of engines by the Memory Used column.

- When using the new pipeline canvas UI while monitoring a job, the title of the job incorrectly displays the pipeline name instead of the job name.

- If you create and publish a pipeline fragment using pipeline stages and you quickly enter a fragment prefix in the last step of the wizard, the newly created fragment is not added to the pipeline.

- When using the Technology Preview search functionality and you sort pipeline search results by the Last Modified By column, the pipelines are incorrectly sorted by name.

2022

The following StreamSets platform releases occurred in 2022.

December 2022

The following StreamSets platform release occurred in December 2022.

December 16, 2022

This release includes several enhancements and fixed issues.

Enhancements

- Pipeline fragments

- When you create a pipeline fragment using pipeline stages and you choose to publish the new fragment, Control Hub displays the original pipeline in the canvas, automatically replacing the individual stages with the newly published fragment.

- Draft runs

- While monitoring an engine in the Engines view, you can stop all draft runs currently running on that engine.

- Search

- The Technology Preview search functionality includes the following enhancements:

- You can perform both basic and advanced searches to find specific draft runs.

- You can search for job instances by the job status color.

- Subscriptions

- You can configure a webhook action for a subscription to use one of the following additional authentication types to connect to the receiving system:

- API Key

- Bearer Token

- OAuth 2.0

Fixed Issues

- The Control Hub REST API incorrectly returns an HTTP 400 status code instead of a 404 status code when the specified environment or deployment does not exist.

- When using the Technology Preview search functionality to find job templates or job instances last modified by a user, the search results incorrectly display the job created by that user.

- When the Legacy Kubernetes integration is enabled, users cannot create a pipeline when they are assigned the Deployment Manager role but not the Provisioning Operator role or the Organization Administrator role.

November 2022

The following StreamSets platform release occurred in November 2022.

November 18, 2022

This release includes several enhancements and fixed issues.

Enhancements

- Deployments

- In the Deployments view, you can filter the list of displayed deployments by engine type.

- Pipeline design

- When configuring the conditions for a Stream Selector processor in a Data Collector pipeline, you can reorder the output conditions.

- Search

- The Technology Preview search functionality includes the following enhancements:

- You can search by user email address to find all pipelines or fragments committed or last modified by the user or to find all job templates or job instances created or last modified by the user.

- When using basic mode to search for job templates or job instances, you can include or exclude the v prefix. For example, you can search for either v1 or 1 when defining the Pipeline Version property.

Previously in basic mode, you had to include the prefix. For example, you had to search for v1.
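
One way to picture the optional v prefix is as a normalization step applied to the entered value before matching. The function below is a hypothetical sketch of that idea, not the Control Hub search implementation.

```python
def normalize_pipeline_version(value: str) -> str:
    """Return the version with a single leading v, whether or not one was entered."""
    value = value.strip()
    # Drop an existing lowercase v prefix so that "v1" and "1" are treated alike.
    if value.startswith("v"):
        value = value[1:]
    return "v" + value
```

With this sketch, entering either v1 or 1 yields the same search value, v1.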

Fixed Issues

- When you expand or collapse a fragment in the job monitoring UI, Control Hub does not update the metric diagrams to display statistics for individual stages in the expanded fragment or for the single fragment stage.

- When the Enable WebSocket Tunneling for UI Communication property is enabled for an organization, all users should be able to configure the Browser to Engine Communication type; however, only organization administrators can do so.

- When using the Technology Preview search functionality, searching for job instances with an INACTIVE job status excludes job instances that have never run.

- When using the Technology Preview search functionality and you sort the search results by job instance status, job instances that have never run are shown first when sorting by ascending status, and last when sorting by descending status.

- When you add a fragment that uses parameters to a pipeline and define no parameter name prefix, Control Hub silently fails to add the fragment to the pipeline.

- When you configure an Azure VM deployment, Control Hub does not display existing SSH key pairs in the Key Pair Name property because the documentation to create an Azure AD custom role for the parent environment does not include the following required permission:

Microsoft.Compute/sshPublicKeys/read

Important: StreamSets strongly recommends updating the custom role for all existing Azure environments, as described in the Azure environment prerequisites.

October 2022

The following StreamSets platform releases occurred in October 2022.

October 28, 2022

This release includes a new feature.

New Feature

- Provisioning of user accounts from Microsoft Entra ID

- After enabling SAML authentication using Microsoft Entra ID (previously known as Azure AD), you can configure the provisioning of user accounts from Entra ID to StreamSets. When a user or group is created, updated, or deleted in Entra ID, the same changes are automatically made in StreamSets.

October 26, 2022

This release includes several enhancements and fixed issues.

Enhancements

- Supported browsers

- StreamSets Control Hub now supports the latest version of Microsoft Edge as a browser.

- Search

- The Technology Preview search functionality includes the following enhancements:

- For job instance and job template search, the Pipeline Commit Label property has been renamed to Pipeline Version to more clearly indicate that the property specifies the version of the pipeline included in a job instance or job template.

- Basic search for job templates no longer displays the Status property because job templates do not have a status.

- Proxy server configuration for engines

- When you set up a deployment that configures a Data Collector or Transformer engine to use a proxy server for outbound network requests, you can now include special characters in the proxy user and password values, except for an exclamation point (!), a backward slash (\), a leading number sign (#), or a leading or trailing space.
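
The character restrictions above can be checked before you enter a proxy user or password value. The following sketch uses a hypothetical helper name and encodes only the four exceptions listed in this note; Control Hub might apply additional validation.

```python
def is_allowed_proxy_value(value: str) -> bool:
    """Check a proxy user or password value against the listed exceptions."""
    # An exclamation point or a backward slash is not allowed anywhere.
    if "!" in value or "\\" in value:
        return False
    # A number sign is not allowed as the first character only.
    if value.startswith("#"):
        return False
    # Leading or trailing spaces are not allowed.
    if value.startswith(" ") or value.endswith(" "):
        return False
    return True
```

For example, a value such as p@ss#word$ passes the check because the number sign is not the leading character, while #password does not.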

Fixed Issues

- The Test Connection button in the connection wizard incorrectly requires a user to have the Deployment Manager role.

- Creating a pipeline incorrectly requires a user to have the Deployment Manager role.

- The Fields to Convert property in the Field Type Converter processor does not allow an expression that includes a comma.

- If you configure an engine to use a proxy server and you include a space, single quotation mark, or double quotation mark in the defined proxy properties, then either the engine installation script fails or the proxy authentication fails.

- Topology data SLAs do not trigger alerts because they do not correctly retrieve data.

October 12, 2022

This release includes a fixed issue.

Fixed Issue

- When you delete multiple users at the same time, a user synchronization error message displays even though the users are successfully deleted.

October 6, 2022

This release includes a fixed issue.

Fixed Issue

- When SAML authentication is enabled, only users with the Organization Administrator role can log in.

October 5, 2022

This release includes new features, enhancements, a behavior change, and fixed issues.

New Features and Enhancements

- Search

- You can perform both basic and advanced searches to find specific pipelines, fragments, job instances, or job templates. With basic search, you define search conditions by selecting the object properties, operators, and values that you want to search for.

- Copy field value in preview

- You can quickly copy a field value from pipeline preview using the Copy Field Value to Clipboard icon.

- Pipeline version history

- When using the new pipeline canvas UI, the pipeline or fragment version history that opens from the canvas displays all information on the initial panel. You do not need to expand the version history to manage the versions including viewing commit messages, comparing versions, creating tags for versions, and deleting versions.

- Import pipelines and fragments without connections

- When you import pipelines or fragments that use connections and those connections do not exist in the target organization, you can choose to import the objects without connections. After the import, you must edit the pipelines or fragments to define the connections.

- Subscription parameters

- You can use the ERROR_MESSAGE parameter for a subscription triggered by a maximum global failover retries exhausted event.

- Engine resource thresholds

- The default value of the Max Memory threshold for an engine is now 100%. Previously, the default value was 80%. Existing engines retain the currently configured threshold.

Behavior Change

With this release, the sample IAM policy for credentials provided by a Control Hub AWS environment includes the following additional permission:

autoscaling:DescribeWarmPool

This permission is required when you update the number of engine instances for an active Amazon EC2 deployment belonging to an AWS environment.

If the IAM policy does not include this permission and you update the number of engine instances, the deployment transitions to an Activation Error state. When you access the tracking URL to the AWS Management Console, the Events tab displays the following error:

API: autoscaling:DescribeWarmPool User: arn:aws:sts::${ACCOUNT_ID}:assumed-role/${CROSS_ACCOUNT_ROLE}/STREAMSETS_SCH is not authorized to perform: autoscaling:DescribeWarmPool because no identity-based policy allows the autoscaling:DescribeWarmPool action

StreamSets strongly recommends updating the IAM policy for credentials for all existing StreamSets AWS environments to avoid this deployment error.

...
{
    "Sid": "0",
    "Effect": "Allow",
    "Action": [
        "ec2:DescribeImages",
        "autoscaling:DescribeScalingActivities",
        "ec2:DescribeVpcs",
        "autoscaling:DescribeAutoScalingGroups",
        "ec2:DescribeRegions",
        "autoscaling:DescribeLaunchConfigurations",
        "ec2:DescribeInstanceTypes",
        "ec2:DescribeInstanceTypeOfferings",
        "ec2:DescribeSubnets",
        "ec2:DescribeKeyPairs",
        "ec2:DescribeSecurityGroups",
        "ec2:DescribeInstances",
        "autoscaling:DescribeScheduledActions",
        "autoscaling:DescribeWarmPool"
    ],
    "Resource": "*"
},
...

You do not need to restart active StreamSets AWS environments or Amazon EC2 deployments after updating the policy. However, if an existing Amazon EC2 deployment has transitioned to an Activation Error state due to this error, then you must stop that deployment and start it again.

For more information about the required IAM policy, see Configure AWS Credentials.
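To audit an existing policy before you update the number of engine instances, a short script can report whether the new permission is present. The following is a minimal sketch, not a StreamSets tool. It assumes that you have the policy document as JSON text in the standard IAM layout (a top-level Statement list). The function name allows_action and the sample policy are hypothetical, and the check matches explicit action names only, so it does not expand wildcards such as autoscaling:*.

```python
import json

# Permission added to the sample IAM policy in this release.
REQUIRED_ACTION = "autoscaling:DescribeWarmPool"


def allows_action(policy: dict, action: str) -> bool:
    """Return True if an Allow statement lists the action explicitly.

    Assumption: wildcards (for example "autoscaling:*") are not expanded,
    so a policy that grants the action through a wildcard reports False.
    """
    statements = policy.get("Statement", [])
    # IAM permits a single statement object instead of a list.
    if isinstance(statements, dict):
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        # IAM also permits a single action string instead of a list.
        if isinstance(actions, str):
            actions = [actions]
        if action in actions:
            return True
    return False


# Hypothetical policy that predates this release: the permission is missing.
policy_text = """
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "0",
      "Effect": "Allow",
      "Action": [
        "ec2:DescribeImages",
        "autoscaling:DescribeScheduledActions"
      ],
      "Resource": "*"
    }
  ]
}
"""

policy = json.loads(policy_text)
if not allows_action(policy, REQUIRED_ACTION):
    print(f"Policy is missing {REQUIRED_ACTION}")
```

If the script reports that the permission is missing, add it to the Action list of the policy as shown in the sample above.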

Fixed Issues

- The Topologies Dashboard can display an incorrect number of engines.

- Pipelines that contain fragments with names starting with a number do not correctly display in the new pipeline canvas UI and encounter unexpected errors in the classic pipeline UI.

- Stages that were originally in a fragment could become uneditable.

August 2022

The following StreamSets platform release occurred in August 2022.

August 26, 2022

This release includes several enhancements, a behavior change, and fixed issues.

Enhancements

- Scroll zoom in pipeline canvas

- You can now zoom in or out of the pipeline canvas using the mouse scroll wheel or using the trackpad. By default, scroll zoom is enabled. You can disable scroll zoom if needed.

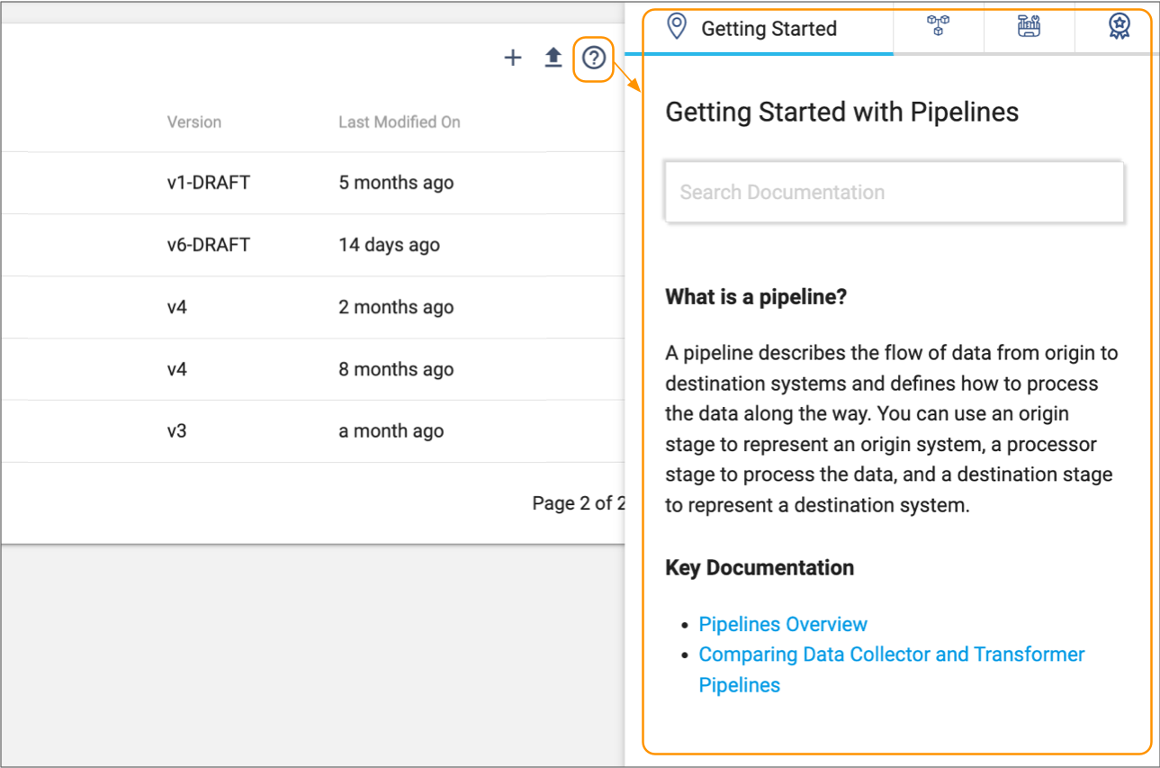

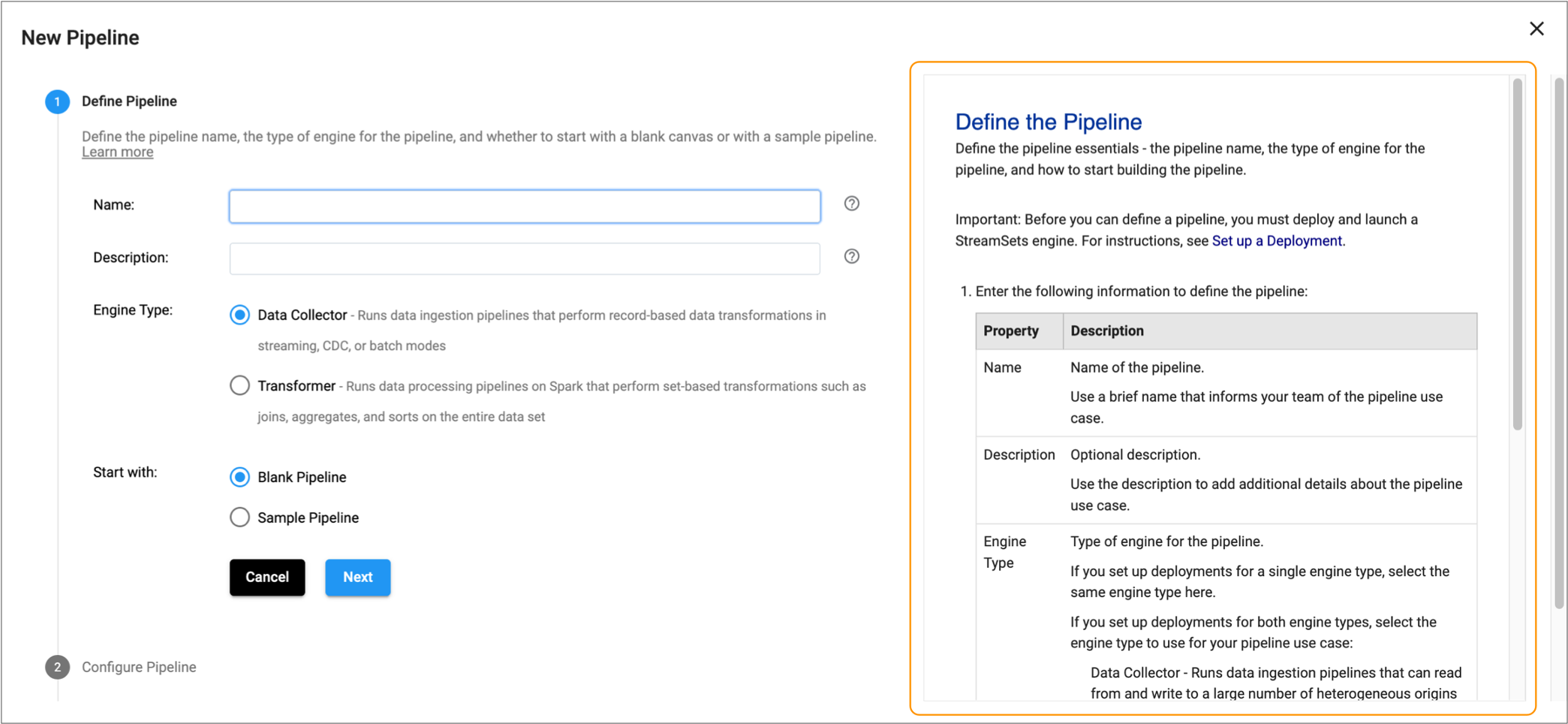

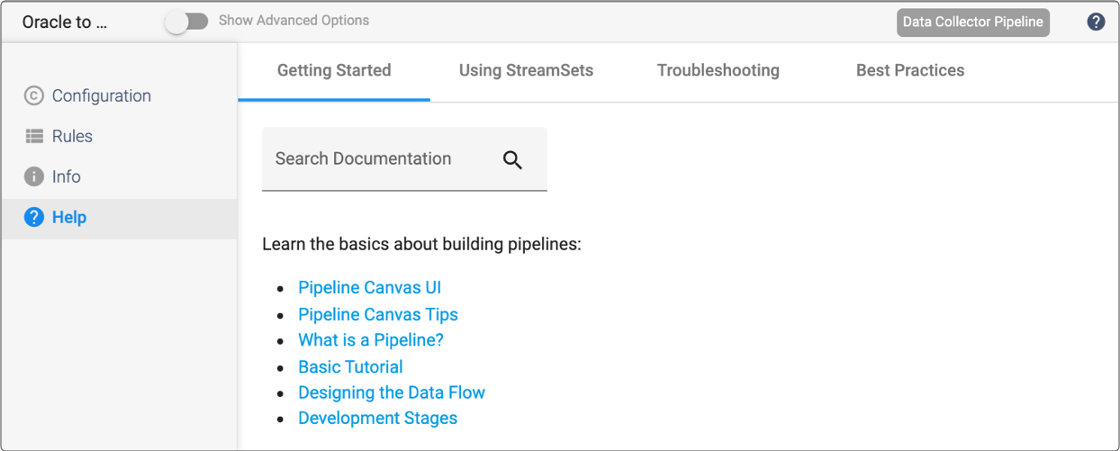

- Hide or show contextual help in wizards

- When you create or edit an object, such as an environment, deployment, pipeline, or job, you can now hide or show the contextual help that displays on the right of the wizard.

Behavior Change

- Filters for job templates, job instances, or draft runs

- With this release, when you select the Keep Filter Persistent checkbox to retain the filter on the Job Templates, Job Instances, or Draft Runs view, Control Hub retains a unique filter for each view. Previously, Control Hub incorrectly used the same filter for all of these views.

Fixed Issues

- In rare cases when editing a deployment, the External Resource Source property can be set to null, which causes a null pointer exception.

- The TRIGGERED_COUNT and TRIGGERED_ON subscription parameters do not contain the correct values.

- The Windowing Aggregator processor does not display aggregation charts.

- You can delete a job associated with a running scheduled task, resulting in a scheduled task that attempts to trigger actions on a job that doesn’t exist.

- In rare cases, the configured time zone for a scheduled task is ignored, which causes the task to start and finish at undesired times.

- Clicking Upload Offset & Start while viewing job instance details uploads the offset but doesn’t start the job.

- Because the initialization script for an Azure VM deployment does not run before engine instances start, you cannot use the script to update DNS entries. As a result, engines fail to launch when the Azure VNet uses custom DNS servers.

July 2022

The following StreamSets platform release occurred in July 2022.

July 20, 2022

This release includes several enhancements and fixed issues.

Enhancements

- Runtime parameters for pipelines

- Some pipeline and stage properties conditionally display child properties. For example, if you configure an origin to use the Delimited data format, the origin displays a set of Delimited configuration properties. If you configure that origin to use the JSON data format, it displays a different set of JSON configuration properties.

- Job monitoring toolbar

- When you monitor a job, the canvas includes a new toolbar that more clearly indicates the action that each toolbar icon completes.

- Starting multiple scheduled jobs at the same time

- When you start multiple jobs at the exact same time using the scheduler, the number of pipelines running on an engine can exceed the Max Running Pipeline Count configured for the engine.

Fixed Issues

- When sharing an object, you can search only for user and group names that start with the search string, not for names that include the search string elsewhere.

- If you rename a job, scheduled tasks for that job do not update the job name.

- When you delete users or groups and then create users or groups with the same ID, permissions might behave unexpectedly.

June 2022

The following StreamSets platform releases occurred in June 2022.

June 17, 2022

This release includes an enhancement and several fixed issues.

Enhancement

- New stages centered in the canvas

- When you use the stage library panel to add a new stage to the pipeline canvas, the stage appears in the center of the current view of the canvas. Previously, it appeared to the right of the right-most stage in the pipeline.

Fixed Issues

- When a deployment contains multiple engine instances and you try to share the deployment with another user or group for a second time, Control Hub fails to update the permissions due to a 500 internal server error.

- A single faulty subscription prevents other suitable subscriptions from triggering.

- You cannot access a draft run of a pipeline when you view the list of running pipelines on an engine from the Engines view.

June 3, 2022

This release includes an enhancement and several fixed issues.

Enhancement

- View and edit connection details from stage properties

- When configuring a pipeline or fragment stage that uses a connection, you can click the Edit Connection icon in the stage properties to view and edit the connection details.

Fixed Issues

- The Available Pipeline Runners histogram does not display when you monitor a job for a Data Collector multithreaded pipeline.

- The pipeline canvas cannot handle more than 100 instances of the same stage.

- Provisioning Agents are incorrectly included in the system limit for engines.

May 2022

The following StreamSets platform releases occurred in May 2022.

May 20, 2022

This release includes several enhancements and fixed issues.

Enhancements

- Compare pipeline and fragment versions

- When using the new pipeline canvas UI to compare two versions of a pipeline or pipeline fragment, you can click the Open in Canvas icon to open one of the versions in the pipeline canvas.

- Engine type icon in Fragments, Pipelines, and Sample Pipelines views

- The Fragments view, Pipelines view, and Sample Pipelines view include an engine type icon next to each pipeline or fragment name so that you can quickly determine the pipeline or fragment type.

Fixed Issues

- Credential fields in a pipeline state notification webhook do not properly show the value of entered passwords when authentication is used.

- On Windows 10, some file archive programs cannot extract an exported Control Hub ZIP file because of the colon (:) character in the JSON file names.

May 6, 2022

This release includes several new features, enhancements, and fixed issues.

New Features and Enhancements

- Draft runs

- Pipeline test runs have been renamed to draft runs to more clearly indicate that a draft run is the execution of a draft pipeline.

- Stage selector in the pipeline canvas

- The new pipeline canvas UI includes an enhanced stage selector with a horizontal layout, making it easier to filter the stages by type.

Fixed Issues

- When authorizing Control Hub API calls, Control Hub does not consider the roles assigned by a user’s group.

- When you change the owner of a scheduled task, the previous owner is incorrectly listed as the user that executes the task.

April 2022

The following StreamSets platform releases occurred in April 2022.

April 27, 2022

This release includes a fixed issue.

Fixed Issue

- You cannot create new users using the Control Hub REST API.

April 22, 2022

This release includes several new features and enhancements.

New Features and Enhancements

- Init scripts for cloud service provider deployments

- You can define an initialization script for cloud service provider deployments, such as Amazon EC2, Azure VM, and GCE deployments. Control Hub runs the init script while provisioning a new instance in your cloud account.

- Pipeline canvas toolbar

- The pipeline canvas includes a new toolbar that more clearly indicates the action that each toolbar icon completes. By default, the pipeline canvas continues to display the classic toolbar.

- Job status

- Jobs that have not run are listed with an Inactive status. Previously, jobs that had not run were listed without a status.

- Editable group ID

- When you create a group, you define the group display name and can optionally edit the group ID. By default, Control Hub generates the group ID from the display name, using all lowercase characters and replacing any spaces with underscores. Users type the group ID when using credential functions in pipelines. As a result, you might want to edit the default group ID to make it easier to use with credential functions.
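The default group ID rule described above can be sketched in a few lines. This is an illustration only, assuming that the two stated rules (lowercase characters, spaces replaced with underscores) are the whole transformation. Control Hub might apply additional handling that these notes do not describe, and the function name default_group_id is hypothetical.

```python
def default_group_id(display_name: str) -> str:
    """Approximate the default group ID derived from a display name.

    Applies only the two rules stated in the release note:
    lowercase every character and replace each space with an underscore.
    """
    return display_name.lower().replace(" ", "_")


print(default_group_id("Data Engineering Team"))
```

A long display name produces a long ID, which is one reason to edit the default to something shorter before users type it in credential functions.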

April 13, 2022

This release includes a fixed issue.

Fixed Issue

- You cannot directly log in to the SAML configuration page as an Organization Administrator if you belong to another organization with SAML authentication disabled.