JSON Parser

The JSON Parser processor parses a JSON object embedded in a string field and passes the parsed data to a map field in the output record.

When you configure the JSON Parser processor, you specify the field that contains the JSON object. You determine whether the processor replaces the data in the original field with the parsed data or passes the parsed data to another field. You also configure whether the processor stops the pipeline with an error or continues processing when the specified JSON field does not exist in a record.

You determine the schema that the processor uses to read the JSON data. The processor can infer the schema from the first record that is read, or you can define a custom schema to use in JSON or DDL format.

Schema

The JSON Parser processor requires a schema to parse the JSON object. You determine the schema that the processor uses in the following ways:

- Schema Inference

  By default, the JSON Parser processor infers the schema from the first incoming record. The JSON object embedded in the specified field in the first record must include all fields in the schema.

  Note: To infer the schema, the processor requires that Apache Spark version 2.4.0 or later is installed on the Transformer machine and on each node in the cluster.

- Custom Schema

  Use a custom schema when the JSON object in the first record does not include all fields in the schema or when the processor infers the schema inaccurately.
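As a rough illustration of why the first record matters, the following plain-Python sketch emulates inference over top-level fields only. It is not the processor's Spark-based implementation; the `infer_schema` helper and the sample records are assumptions made for this example.

```python
import json


def infer_schema(first_json: str) -> dict:
    """Hypothetical helper: map each top-level field in the first JSON
    object to the name of its Python type."""
    obj = json.loads(first_json)
    return {name: type(value).__name__ for name, value in obj.items()}


# Assumed sample data: the first record lacks the Items field.
first = '{"TransactionID": "G-23525-3350", "Verified": true}'
later = '{"TransactionID": "G-23525-3351", "Verified": false, "Items": ["T-35089"]}'

schema = infer_schema(first)

# Fields that appear only in later records never make it into the schema.
missing_from_schema = sorted(set(json.loads(later)) - set(schema))

print(schema)
print(missing_from_schema)
```

In this sketch, `Items` is absent from the inferred schema because the first record did not contain it, which is the situation where a custom schema is the safer choice.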

JSON Schema Format

To use JSON to define a custom schema, specify the field names and data types within a root field that uses the Struct data type.

The nullable attribute is required for most fields.

The root field has the following structure:

    {
      "type": "struct",
      "fields": [
        {
          "name": "<first field name>",
          "type": "<data type>",
          "nullable": <true|false>
        },
        {
          "name": "<second field name>",
          "type": "<data type>",
          "nullable": <true|false>
        }
      ]
    }

A List field uses the Array data type and specifies the data type of its subfields:

    {
      "name": "<list field name>",
      "type": {
        "type": "array",
        "elementType": "<subfield data type>",
        "containsNull": <true|false>
      }
    }

A Map field uses the Struct data type and defines its subfields:

    {
      "name": "<map field name>",
      "type": {
        "type": "struct",
        "fields": [
          {
            "name": "<first subfield name>",
            "type": "<data type>",
            "nullable": <true|false>
          },
          {
            "name": "<second subfield name>",
            "type": "<data type>",
            "nullable": <true|false>
          }
        ]
      },
      "nullable": <true|false>
    }

Example

{

"type": "struct",

"fields": [

{

"name": "TransactionID",

"type": "string",

"nullable": false

},

{

"name": "Verified",

"type": "boolean",

"nullable":false

},

{

"name": "User",

"type": {

"type": "struct",

"fields": [ {

"name": "ID",

"type": "long",

"nullable": true

}, {

"name": "Name",

"type": "string",

"nullable": true

} ] },

"nullable": true

},

{

"name": "Items",

"type": {

"type": "array",

"elementType": "string",

"containsNull": true},

"nullable":true

}

]

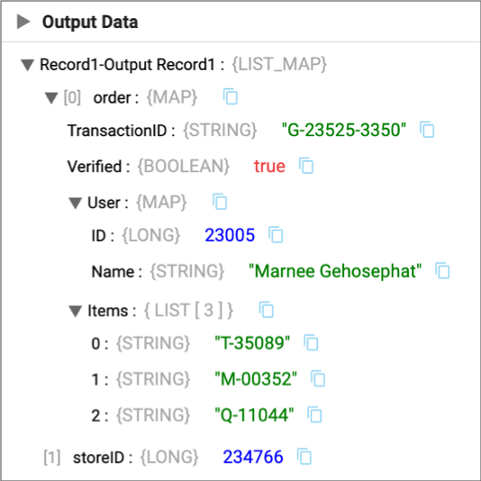

}order

field:{"Verified":true, "Items":["T-35089", "M-00352", "Q-11044"], "TransactionID":"G-23525-3350", "User":{"ID":23005,"Name":"Marnee Gehosephat"}}The processor generates the following record when configured to replace the

order field with the parsed data:

Notice the User Map field with the Long and String subfields and the

Items List field with String subfields. In addition, the order

of the fields now matches the order in the custom schema. Also note that any

remaining fields in the record are passed to the output record unchanged, the

storeID field in this example.
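Writing nested JSON schemas by hand is error-prone, so it can help to generate them. The following sketch builds the example schema above with Python's standard `json` module. The helper names `field`, `array_of`, and `struct_of` are hypothetical and exist only for this illustration; they are not part of any Transformer API.

```python
import json


def field(name, data_type, nullable=True):
    """Hypothetical helper: one field entry with the required nullable attribute."""
    return {"name": name, "type": data_type, "nullable": nullable}


def array_of(element_type, contains_null=True):
    """Hypothetical helper: the type definition for a List field."""
    return {"type": "array", "elementType": element_type, "containsNull": contains_null}


def struct_of(*fields):
    """Hypothetical helper: the type definition for the root or for a Map field."""
    return {"type": "struct", "fields": list(fields)}


schema = struct_of(
    field("TransactionID", "string", nullable=False),
    field("Verified", "boolean", nullable=False),
    field("User", struct_of(field("ID", "long"), field("Name", "string"))),
    field("Items", array_of("string")),
)

# Serialize to text that can be pasted into the custom schema property.
schema_json = json.dumps(schema, indent=2)
print(schema_json)
```

Because the output comes from `json.dumps`, brackets and commas are always balanced, which avoids the most common cause of an invalid schema.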

DDL Schema Format

<first field name> <data type>, <second field name> <data type>, <third field name> <data type><list field name> Array <subfields data type><map field name> Struct < <first subfield name>:<data type>, <second subfield name>:<data type> >`count`.Example

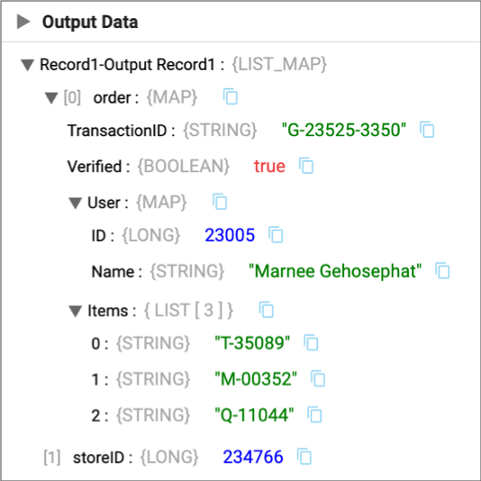

TransactionID String, Verified Boolean, User Struct <ID:Integer, Name:String>, Items Array <String>When processing the following JSON object embedded in an order

field:

{"Verified":true,"User":{"ID":23005,"Name":"Marnee Gehosephat"},"Items":["T-35089", "M-00352", "Q-11044"],"TransactionID":"G-23525-3350"}The processor generates the following record when configured to replace the

order field with the parsed data:

Notice the User Map field with the Long and String subfields and the

Items List field with String subfields. In addition, the order

of the fields now matches the order in the custom schema. Also note that any

remaining fields in the record are passed to the output record unchanged, the

storeID field in this example.
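To make the DDL syntax concrete, the following sketch renders a small nested description into a DDL string that matches the example schema above. The tuple-based representation and the `ddl_type` and `to_ddl` helpers are assumptions invented for this illustration, not part of Transformer or Spark.

```python
def ddl_type(data_type):
    """Hypothetical helper: render a simple, Array, or Struct type as DDL text."""
    if isinstance(data_type, str):
        return data_type
    kind, body = data_type
    if kind == "array":
        return f"Array <{ddl_type(body)}>"
    if kind == "struct":
        subfields = ", ".join(f"{name}:{ddl_type(sub)}" for name, sub in body)
        return f"Struct <{subfields}>"
    raise ValueError(f"unsupported type kind: {kind}")


def to_ddl(fields):
    """Hypothetical helper: join (name, type) pairs into a comma-separated DDL schema."""
    return ", ".join(f"{name} {ddl_type(data_type)}" for name, data_type in fields)


fields = [
    ("TransactionID", "String"),
    ("Verified", "Boolean"),
    ("User", ("struct", [("ID", "Integer"), ("Name", "String")])),
    ("Items", ("array", "String")),
]

ddl = to_ddl(fields)
print(ddl)
```

Note the two separators: top-level fields place a space between name and type, while Struct subfields use a colon.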

Schema Error Handling

The JSON Parser processor handles schema errors the same way, whether the schema is inferred from the first incoming record or defined in a custom schema.

When the JSON schema is not valid, the processor stops the pipeline with an error.

When the specified field to parse does not include valid JSON data, the processor passes all fields defined in the schema to the output record with null values.

When the specified field contains a valid JSON object that does not match the schema, the processor's behavior depends on how the JSON object differs:

- Includes a field not defined in the schema

  The processor ignores the field, dropping it from the output record.

- Is missing a field defined in the schema

  The processor passes the field to the output record with a null value.

- Includes data in a field that is not compatible with the data type defined in the schema

  The processor handles the error based on the Error Handling mode that you define on the Schema tab:

  - Permissive - The processor passes the field with a null value to the output record and continues processing.
  - Fail Fast - The processor stops the pipeline with an error.

Note: The Error Handling property requires that Apache Spark version 3.0.0 or later is installed on the Transformer machine and on each node in the cluster. For earlier Spark versions, when a field is not compatible with the data type defined in the schema, the processor ignores the field, dropping it from the output record.
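The rules above can be sketched in plain Python. This is an emulation for illustration only, not the processor's implementation: the flat `SCHEMA`, the `parse_order` function, the `SchemaMismatch` exception, and the mode strings are all assumptions, and the strict type comparison is far simpler than the type compatibility rules Spark applies.

```python
import json

# Assumed flat schema for the sketch: field name -> expected Python type.
SCHEMA = {"TransactionID": str, "Verified": bool, "Quantity": int}


class SchemaMismatch(Exception):
    """Stands in for the pipeline stopping with an error."""


def parse_order(raw, schema, mode="permissive"):
    """Emulate the documented handling for one embedded JSON string."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        obj = None
    if not isinstance(obj, dict):
        # Not valid JSON data: every schema field is passed with a null value.
        return {name: None for name in schema}

    out = {}
    for name, expected in schema.items():  # fields outside the schema are dropped
        value = obj.get(name)              # fields missing from the data become null
        if value is not None and type(value) is not expected:
            if mode == "failfast":
                raise SchemaMismatch(f"{name}: expected {expected.__name__}")
            value = None                   # permissive: null value, keep processing
        out[name] = value
    return out


extra = parse_order('{"TransactionID": "G-1", "Verified": true, "Quantity": 2, "Note": "x"}', SCHEMA)
missing = parse_order('{"TransactionID": "G-1"}', SCHEMA)
bad_type = parse_order('{"TransactionID": "G-1", "Verified": true, "Quantity": "two"}', SCHEMA)
invalid = parse_order("not json", SCHEMA)
```

The sketch compares types with `type(value) is not expected` rather than `isinstance` because Python treats `bool` as a subclass of `int`, which would otherwise let a Boolean pass as an integer.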

Configuring a JSON Parser Processor

Configure a JSON Parser processor to parse a JSON object embedded in a string field.

- In the Properties panel, on the General tab, configure the following properties:

  | General Property | Description |
  | --- | --- |
  | Name | Stage name. |
  | Description | Optional description. |
  | Cache Data | Caches data processed for a batch so the data can be reused for multiple downstream stages. Use to improve performance when the stage passes data to multiple stages. Caching can limit pushdown optimization when the pipeline runs in ludicrous mode. |

- On the JSON Parser tab, configure the following properties:

  | JSON Parser Property | Description |
  | --- | --- |
  | JSON Field | Field that contains the JSON object. |
  | Replace Field | Replaces the original data in the field containing the JSON object with the parsed data. |
  | Output Field | Output field for the parsed JSON object. You can specify an existing field or a new field. If the field does not exist, the JSON Parser processor creates the field. Available when Replace Field is cleared. |
  | Fail if Missing | Stops the pipeline with an error if the specified JSON field does not exist in a record. When cleared, the processor passes the record to the next stage and then continues processing. |

- On the Schema tab, configure the following properties:

  | Schema Property | Description |
  | --- | --- |
  | Schema Mode | Mode that determines the schema to use when processing data. Infer from Data: the processor infers the field names and data types from the data. Use Custom Schema - JSON Format: the processor uses a custom schema defined in the JSON format. Use Custom Schema - DDL Format: the processor uses a custom schema defined in the DDL format. Note: To infer the schema, the processor requires that Apache Spark version 2.4.0 or later is installed on the Transformer machine and on each node in the cluster. |
  | Schema | Custom schema to use to process the data. Enter the schema in JSON or DDL format, depending on the selected schema mode. |
  | Error Handling | Determines how the stage handles errors when a field in the JSON object is not compatible with the data type defined in the schema. Permissive: passes the field with a null value to the output record and continues processing. Fail Fast: stops the pipeline with an error. Note: The Error Handling property requires that Apache Spark version 3.0.0 or later is installed on the Transformer machine and on each node in the cluster. For earlier Spark versions, when a field is not compatible with the data type defined in the schema, the processor ignores the field, dropping it from the output record. |
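To show how the JSON Parser tab properties interact, here is a minimal plain-Python emulation for a single record. The `apply_parser` function, its keyword arguments, the `MissingFieldError` exception, and the sample storeID value are all assumptions made for this sketch. It does not model schemas or the processor's default settings.

```python
import json


class MissingFieldError(Exception):
    """Stands in for the pipeline stopping with an error."""


def apply_parser(record, json_field, *, replace_field, fail_if_missing, output_field=None):
    """Emulate JSON Field, Replace Field, Output Field, and Fail if Missing."""
    if json_field not in record:
        if fail_if_missing:
            raise MissingFieldError(f"field '{json_field}' does not exist in the record")
        return dict(record)  # pass the record on unchanged

    if not replace_field and output_field is None:
        raise ValueError("output_field is required when replace_field is False")

    out = dict(record)  # remaining fields are passed through unchanged
    target = json_field if replace_field else output_field
    out[target] = json.loads(record[json_field])
    return out


# Assumed sample record; the storeID value is made up for the example.
record = {"storeID": 107, "order": '{"Verified": true, "Items": ["T-35089"]}'}

replaced = apply_parser(record, "order", replace_field=True, fail_if_missing=True)
kept = apply_parser(record, "order", replace_field=False, output_field="parsed",
                    fail_if_missing=True)
passed_on = apply_parser({"storeID": 107}, "order", replace_field=True,
                         fail_if_missing=False)
```

With Replace Field selected, the string in `order` gives way to the parsed map. With it cleared, the original string is kept and the parsed map lands in the separate output field.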