Pipeline Statistics

A Control Hub job defines the pipeline to run and the Data Collectors that run the pipeline. When you start a job, Control Hub remotely runs the pipeline on the group of Data Collectors. To monitor the job statistics and metrics within Control Hub, you must configure the pipeline to write statistics to Control Hub or to another system.

When a job runs on a single Data Collector, you can simply configure the pipeline to write the statistics directly to Control Hub. By default, pipelines are configured to write statistics directly to Control Hub.

When a job runs on multiple Data Collectors, a remote pipeline instance runs on each of the Data Collectors. To view aggregated statistics for the job within Control Hub, you must configure the pipeline to write the statistics to one of the following systems:

- SDC RPC

- Kafka cluster

- Amazon Kinesis Streams

- MapR Streams

When you start a job that includes a pipeline configured to write to Kafka, Kinesis, MapR Streams, or SDC RPC, Control Hub automatically generates and runs a system pipeline for the job. The system pipeline reads the statistics written by each running pipeline instance to Kafka, Kinesis, MapR Streams, or SDC RPC. Then, the system pipeline aggregates and sends the statistics to Control Hub.
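The aggregation step can be pictured with a minimal sketch. It assumes each pipeline instance reports a dictionary of counters. The counter names (`input_records`, `output_records`, `error_records`) are hypothetical and do not reflect the actual statistics format used by the system pipeline.

```python
from collections import Counter
from typing import Iterable, Mapping


def aggregate_statistics(instance_stats: Iterable[Mapping[str, int]]) -> dict[str, int]:
    """Sum per-instance counters into one job-level view."""
    totals: Counter[str] = Counter()
    for stats in instance_stats:
        totals.update(stats)  # adds the counts key by key
    return dict(totals)


# Hypothetical counters reported by three pipeline instances of one job.
per_instance = [
    {"input_records": 1200, "output_records": 1180, "error_records": 20},
    {"input_records": 900, "output_records": 900},
    {"input_records": 450, "output_records": 440, "error_records": 10},
]

job_totals = aggregate_statistics(per_instance)
print(job_totals)
```

The sketch only illustrates why an intermediate system is needed: every instance writes its own counters, and a single reader combines them before sending one view to Control Hub.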

When a Control Hub job includes a pipeline that is configured to write statistics, you can view the statistics and metrics when you monitor the job in Control Hub.

When a Control Hub job includes a pipeline that is configured to discard statistics, Control Hub cannot display statistics and metrics for the job. Instead, Control Hub displays the following warning message when you monitor the job:

Aggregated metrics for the job are not available as individual pipeline metrics are discarded.

Pipeline Execution Mode

Pipelines can run in standalone, cluster, or edge execution mode. Some pipeline execution modes do not support all statistics aggregator options.

Write Statistics Directly to Control Hub

When you write statistics directly to Control Hub, Control Hub does not generate a system pipeline for the job. Instead, the Data Collector directly sends the statistics to Control Hub.

Write statistics directly to Control Hub when the job runs on a single Data Collector. If the job runs on multiple Data Collectors, Control Hub can display the pipeline statistics for each individual Data Collector. However, Control Hub cannot display an aggregated view of the statistics across all running pipeline instances.

Write Statistics to SDC RPC

When you write statistics to SDC RPC, Data Collector effectively adds an SDC RPC destination to the pipeline that you are configuring. Control Hub automatically generates and runs a system pipeline for the job. The system pipeline is a pipeline with a Dev SDC RPC with Buffering origin that reads the statistics passed from the SDC RPC destination, and then aggregates and sends the statistics to Control Hub.

Write statistics to SDC RPC when the job runs on multiple Data Collectors.

When you write statistics to SDC RPC, you define the following properties:

- SDC RPC connection - The host and port number of the Data Collector machine where Control Hub starts the system pipeline. The host must be a Data Collector machine registered with Control Hub that can run a pipeline for the job. A Data Collector can run the pipeline when it has all labels associated with the job.

For example, if you associate the job with the WestCoast label, then the host specified in the RPC connection must be a machine with a registered Data Collector that also has the WestCoast label.

- SDC RPC ID - A user-defined identifier that allows SDC RPC stages to recognize each other. To avoid mixing statistics from different jobs, use a unique ID for each job.

You can optionally enable encryption to pass data securely and define retry and timeout properties.

For more information about SDC RPC pipelines, see SDC RPC Pipeline Overview.
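The label rule for the SDC RPC connection host can be sketched as a subset check: a Data Collector qualifies only when its labels include every label associated with the job. The host names, port, and labels other than WestCoast are hypothetical values used for illustration.

```python
def can_run_job(collector_labels: set[str], job_labels: set[str]) -> bool:
    """A Data Collector qualifies only if it has every label of the job."""
    return job_labels.issubset(collector_labels)


# Hypothetical registered Data Collectors and their labels.
collectors = {
    "sdc-west-1:20000": {"WestCoast", "Production"},
    "sdc-east-1:20000": {"EastCoast", "Production"},
    "sdc-dev-1:20000": {"Development"},
}

job_labels = {"WestCoast"}

# Hosts that would be valid choices for the SDC RPC connection property.
eligible_hosts = sorted(
    host for host, labels in collectors.items() if can_run_job(labels, job_labels)
)
print(eligible_hosts)  # ['sdc-west-1:20000']
```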

Best Practices for SDC RPC

Consider the following best practices when you configure a pipeline to write statistics to SDC RPC:

- To avoid mixing statistics from different jobs, use a unique SDC RPC ID for each job.

- Monitor the disk space where the Dev SDC RPC with Buffering origin in the system pipeline temporarily buffers the records to disk before passing the records to the next stage in the pipeline.

The Dev SDC RPC with Buffering origin in the system pipeline temporarily buffers the statistics to a queue on disk. If the system pipeline slows, the temporary location on disk might become full. The temporary statistics are written to the location specified in the java.io.tmpdir system property, to a file with the following name:

sdc-fragments<file ID>.queueFile
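One way to watch this location is a small script that totals the size of the queue files and reports how much of the disk is still free. This is a sketch under stated assumptions: the directory passed in must be the actual `java.io.tmpdir` value of the Data Collector JVM, and Python's own temporary directory is used below only as a placeholder.

```python
import glob
import os
import shutil
import tempfile


def queue_file_usage(tmp_dir: str) -> tuple[int, float]:
    """Return (bytes used by queue files, fraction of the disk that is free)."""
    pattern = os.path.join(tmp_dir, "sdc-fragments*.queueFile")
    queue_bytes = sum(os.path.getsize(path) for path in glob.glob(pattern))
    usage = shutil.disk_usage(tmp_dir)
    return queue_bytes, usage.free / usage.total


# Placeholder: replace with the java.io.tmpdir value of the Data Collector JVM.
tmp_dir = tempfile.gettempdir()
queue_bytes, free_fraction = queue_file_usage(tmp_dir)
print(f"queue files: {queue_bytes} bytes, disk free: {free_fraction:.0%}")
```

A scheduled job could run this check and raise an alert when the free fraction drops below a threshold chosen for the environment.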

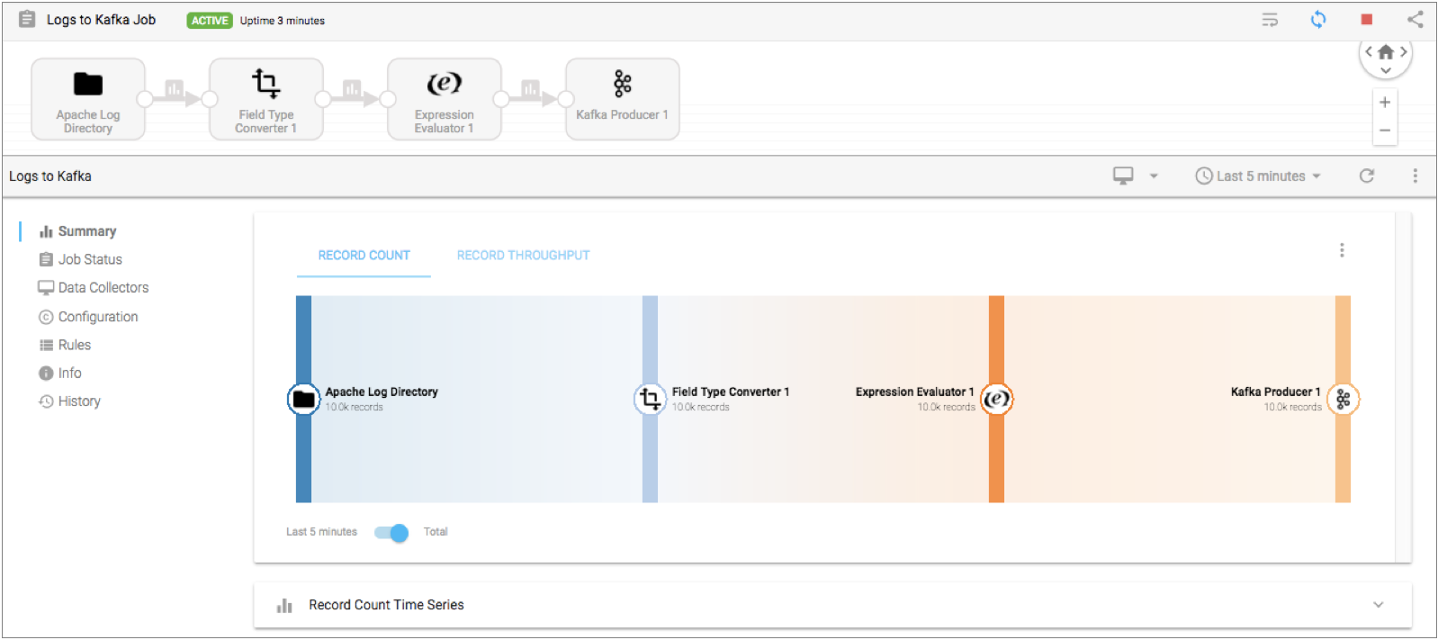

Write Statistics to Kafka

When you write statistics to a Kafka cluster, Data Collector effectively adds a Kafka Producer destination to the pipeline that you are configuring. Control Hub automatically generates and runs a system pipeline for the job. The system pipeline reads the statistics from Kafka, and then aggregates and sends the statistics to Control Hub.

Write statistics to a Kafka cluster when the job runs on multiple Data Collectors.

When you write statistics to a Kafka cluster, you define connection information and the topic to write to.

You also configure the partition strategy. The pipeline passes data to partitions in the Kafka topic based on the partition strategy that you choose. You can add Kafka configuration properties as needed.

Partition Strategy

The partition strategy determines how to write statistics to Kafka partitions. You can use a partition strategy to balance the workload or to write data semantically.

The pipeline can use one of the following partition strategies:

- Round-Robin - Writes statistics to a different partition using a cyclical order. Use for load balancing.

- Random - Writes statistics to a different partition using a random order. Use for load balancing.

- Expression - Writes statistics to a partition based on the results of the partition expression. Use to perform semantic partitioning. When you configure the partition expression, define the expression to evaluate to the partition where you want statistics written.

- Default - Writes statistics using the default partition strategy that Kafka provides.
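The first three strategies can be illustrated with a short sketch. It is not the Kafka Producer implementation: a Python callable stands in for the partition expression, and the `instance_index` field is a hypothetical record attribute. The Default strategy is omitted because its behavior is defined by Kafka.

```python
import itertools
import random
from typing import Callable, Iterator


def round_robin(num_partitions: int) -> Iterator[int]:
    """Yield partitions in cyclical order: 0, 1, ..., n-1, 0, 1, ..."""
    return itertools.cycle(range(num_partitions))


def random_partition(num_partitions: int, rng: random.Random) -> int:
    """Pick a partition uniformly at random."""
    return rng.randrange(num_partitions)


def expression_partition(
    record: dict, expression: Callable[[dict], int], num_partitions: int
) -> int:
    """Evaluate the expression and check that it names a valid partition."""
    partition = expression(record)
    if not 0 <= partition < num_partitions:
        raise ValueError(f"expression returned invalid partition {partition}")
    return partition


rr = round_robin(3)
print([next(rr) for _ in range(5)])  # [0, 1, 2, 0, 1]

# Semantic partitioning: records from the same instance share a partition.
record = {"instance_index": 4}  # hypothetical field
print(expression_partition(record, lambda r: r["instance_index"] % 3, 3))  # 1
```

Round-robin and random spread the load evenly without regard to content, while the expression strategy keeps related statistics together at the cost of possibly uneven partitions.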

Best Practices for a Kafka Cluster

Consider the following best practices when you configure a pipeline to write statistics to a Kafka cluster:

- To avoid mixing statistics from different jobs, use a unique topic name for each job.

- Consider the Kafka retention policy.

Each running pipeline instance writes statistics to Kafka, and then the system pipeline consumes the statistics from Kafka. If the system pipeline unexpectedly shuts down, Kafka retains the statistics for the amount of time determined by the Kafka retention policy. If the system pipeline is down for longer than Kafka retains data, the statistics are lost.
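The retention risk can be estimated with simple arithmetic. The sketch below assumes a retention period of 168 hours, the usual broker default for `log.retention.hours`, and treats retention as an exact cutoff. In practice Kafka deletes data per log segment, so the actual timing can differ.

```python
from datetime import timedelta


def lost_window(downtime: timedelta, retention: timedelta) -> timedelta:
    """Return the span of statistics that expired before the system pipeline resumed."""
    return max(downtime - retention, timedelta(0))


retention = timedelta(hours=168)  # assumed retention policy

print(lost_window(timedelta(hours=2), retention))  # 0:00:00
print(lost_window(timedelta(days=8), retention))   # 1 day, 0:00:00
```

A retention period longer than the longest expected outage of the system pipeline keeps the lost window at zero.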

Write Statistics to Kinesis Streams

When you write statistics to Amazon Kinesis Streams, Data Collector effectively adds a Kinesis Producer destination to the pipeline that you are configuring. Control Hub automatically generates and runs a system pipeline for the job. The system pipeline reads the statistics from Kinesis Streams, and then aggregates and sends the statistics to Control Hub.

Write statistics to Kinesis Streams when the job runs on multiple Data Collectors.

When you write statistics to Kinesis Streams, you define connection information and the stream to write to.

You also configure the partition strategy. The pipeline passes data to partitions in Kinesis shards based on the partition strategy that you choose. You can add Kinesis configuration properties as needed.

AWS Credentials

When the pipeline writes aggregated statistics to Amazon Kinesis Streams, it must pass credentials to Amazon Web Services.

Use one of the following methods to pass AWS credentials:

- IAM roles - When Data Collector runs on an Amazon EC2 instance, you can use the AWS Management Console to configure an IAM role for the EC2 instance. Data Collector uses the IAM instance profile credentials to automatically connect to AWS. When you use IAM roles, you do not need to specify the Access Key ID and Secret Access Key properties when you configure statistics for the pipeline. For more information about assigning an IAM role to an EC2 instance, see the Amazon EC2 documentation.

- AWS access key pairs - When Data Collector does not run on an Amazon EC2 instance or when the EC2 instance does not have an IAM role, you must specify the Access Key ID and Secret Access Key properties when you configure statistics for the pipeline.

Best Practices for Kinesis Streams

Consider the following best practices when you configure a pipeline to write statistics to Amazon Kinesis Streams:

- To avoid mixing statistics from different jobs, use a unique stream name for each job.

- Consider the Kinesis Streams retention policy.

Each running pipeline instance writes statistics to Kinesis Streams, and then the system pipeline reads the statistics from Kinesis Streams. If the system pipeline unexpectedly shuts down, Kinesis Streams retains the statistics for the amount of time determined by the Kinesis Streams retention policy. If the system pipeline is down for longer than Kinesis Streams retains data, the statistics are lost.
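A unique stream name per job can be derived from the job ID. The sketch below assumes a hypothetical `sdc-stats-` prefix and a hypothetical job ID format. It replaces characters outside the set that Kinesis accepts in stream names (letters, digits, underscore, period, hyphen) and enforces the 128-character limit.

```python
import re

MAX_STREAM_NAME_LENGTH = 128  # Kinesis stream name length limit


def stats_stream_name(job_id: str, prefix: str = "sdc-stats-") -> str:
    """Build a Kinesis-safe stream name from a job ID (prefix is hypothetical)."""
    safe_id = re.sub(r"[^a-zA-Z0-9_.-]", "-", job_id)
    name = prefix + safe_id
    if len(name) > MAX_STREAM_NAME_LENGTH:
        raise ValueError(f"stream name exceeds {MAX_STREAM_NAME_LENGTH} characters")
    return name


print(stats_stream_name("job:1234@org"))  # sdc-stats-job-1234-org
```

Because replaced characters all map to a hyphen, two different job IDs could in rare cases produce the same name, so the result is worth checking against existing streams before use.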

Write Statistics to MapR Streams

When you write statistics to MapR Streams, Data Collector effectively adds a MapR Streams Producer destination to the pipeline that you are configuring. Control Hub automatically generates and runs a system pipeline for the job. The system pipeline reads the statistics from MapR Streams, and then aggregates and sends the statistics to Control Hub.

Write statistics to MapR Streams when the job runs on multiple Data Collectors.

When you write statistics to MapR Streams, you define the topic to write to. You also configure the partition strategy. The pipeline passes data to partitions in the MapR Streams topic based on the partition strategy that you choose. You can add MapR Streams configuration properties as needed.

Before you can write statistics to MapR Streams, you must perform additional steps to enable Data Collector to process MapR data. For more information, see MapR Prerequisites.

Partition Strategy

The partition strategy determines how to write statistics to MapR Streams partitions. You can use a partition strategy to balance the workload or to write data semantically.

The pipeline can use one of the following partition strategies:

- Round-Robin - Writes each record to a different partition using a cyclical order. Use for load balancing.

- Random - Writes each record to a different partition using a random order. Use for load balancing.

- Expression - Writes each record to a partition based on the results of the partition expression. Use to perform semantic partitioning. When you configure the partition expression, define the expression to evaluate to the partition where you want each record written. The expression must return a numeric value.

- Default - Writes each record using the default partition strategy that MapR Streams provides.

Best Practices for MapR Streams

Consider the following best practices when you configure a pipeline to write statistics to MapR Streams:

- To avoid mixing statistics from different jobs, use a unique topic name for each job.

- Consider the MapR Streams retention policy.

Each running pipeline instance writes statistics to MapR Streams, and then the system pipeline consumes the statistics from MapR Streams. If the system pipeline unexpectedly shuts down, MapR Streams retains the statistics for the amount of time determined by the MapR Streams retention policy. If the system pipeline is down for longer than MapR Streams retains data, the statistics are lost.

Configuring a Pipeline to Write Statistics

You can configure a pipeline to write statistics after the Data Collector has been registered with Control Hub.